Entropy

Essential AI Math Excel Blueprints

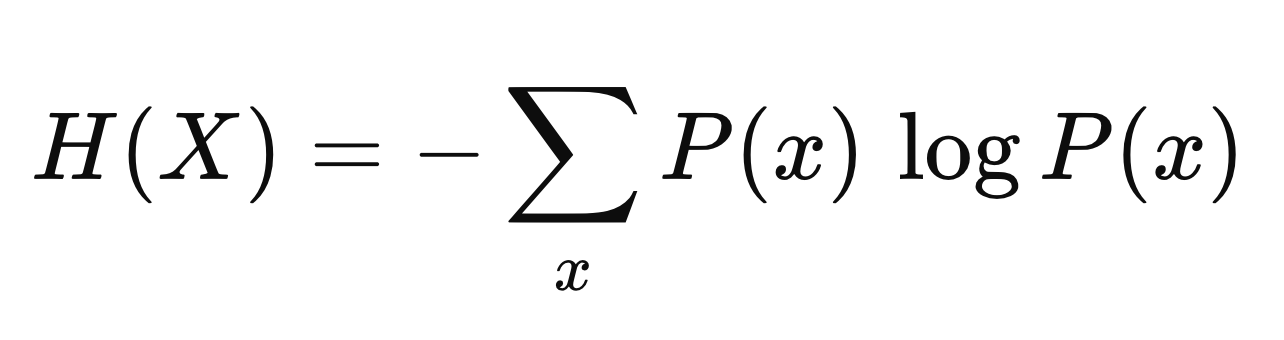

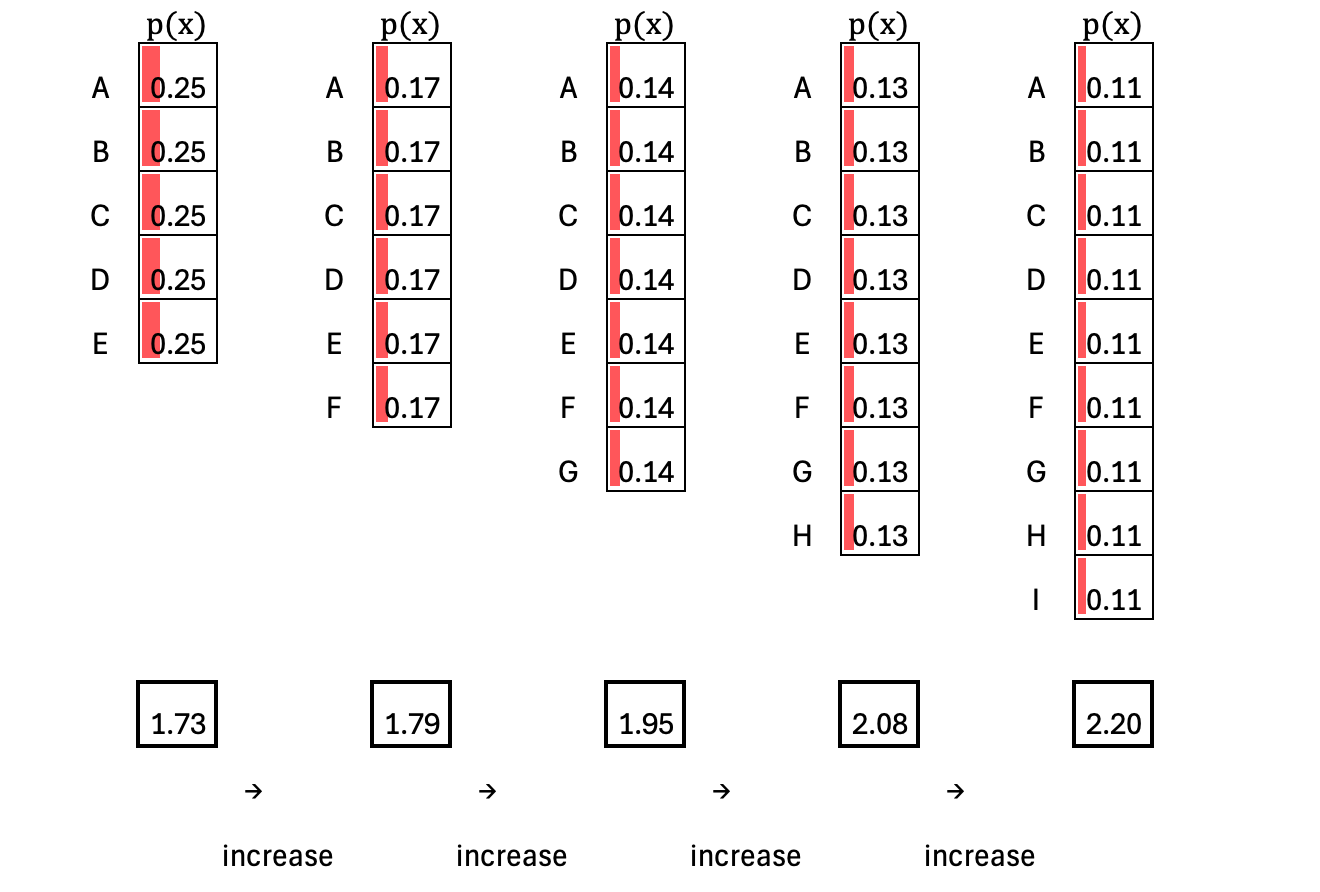

Entropy measures the inherent uncertainty (surprise) of a probability distribution. If one outcome is guaranteed (for example, B occurs with probability 1), there is no uncertainty at all, so entropy is zero. If B is almost certain but not guaranteed, a small amount of uncertainty remains, so entropy is low. If two outcomes have comparable probabilities, uncertainty increases and entropy is higher. Finally, when all possible outcomes are equally likely, uncertainty is maximized, and entropy reaches its highest value for that set of outcomes.
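To make the four cases concrete, here is a minimal Python sketch. It assumes the standard Shannon definition, H = -Σ p·ln(p), with terms where p = 0 skipped. The three-outcome probability lists are illustrative values chosen for this example, not numbers taken from the figures.

```python
import math

def entropy(probs):
    """Shannon entropy in nats: the sum of -p * ln(p) over outcomes with p > 0."""
    return sum(-p * math.log(p) for p in probs if p > 0)

# Hypothetical distributions over three outcomes (A, B, C).
certain = [0.0, 1.0, 0.0]           # B is guaranteed
almost_certain = [0.01, 0.98, 0.01]  # B is very likely, but not guaranteed
two_likely = [0.48, 0.50, 0.02]      # A and B have comparable probabilities
uniform = [1 / 3, 1 / 3, 1 / 3]      # all outcomes equally likely

for name, probs in [
    ("certain", certain),
    ("almost certain", almost_certain),
    ("two likely", two_likely),
    ("uniform", uniform),
]:
    print(f"{name:>15}: {entropy(probs):.3f}")
```

The printed values rise from zero for the certain case up to ln 3 for the uniform case, following the same ordering as the description above.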

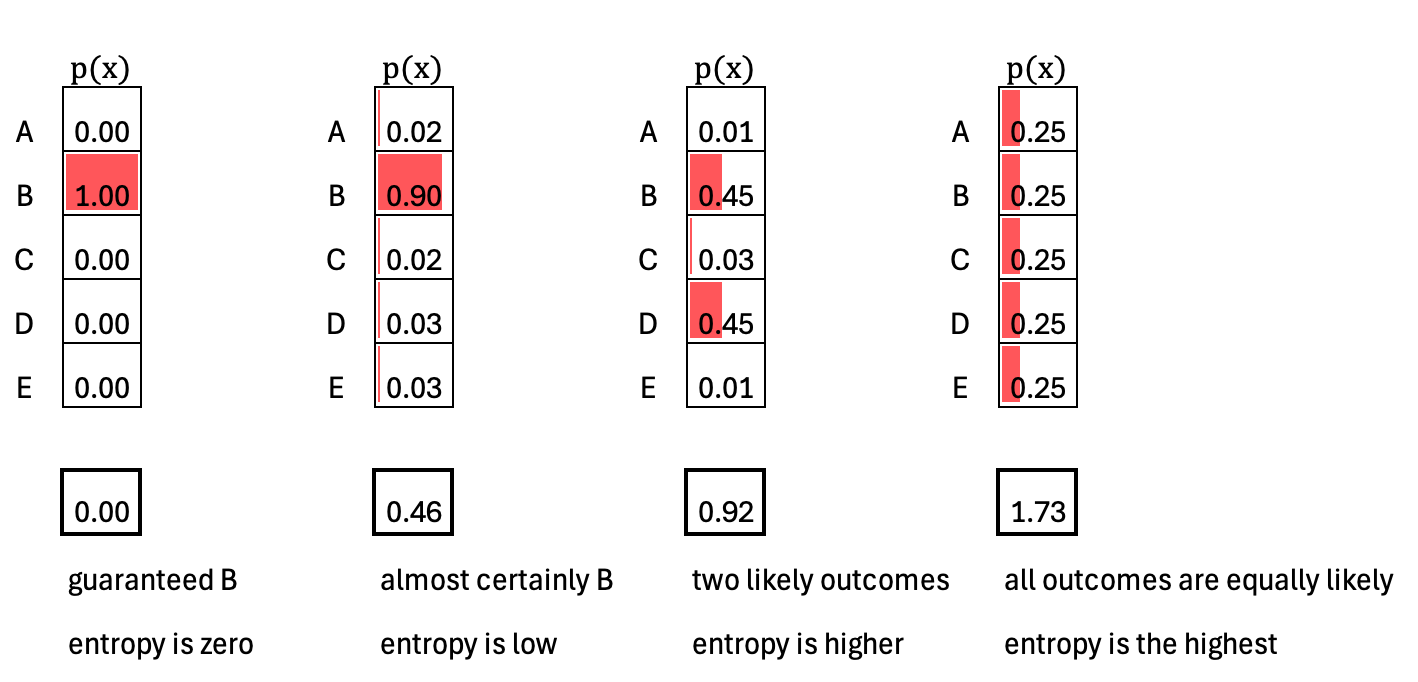

Entropy also increases as uncertainty spreads across more possible outcomes. For example, if there are 5 possible outcomes (A–E) and all are equally likely, the entropy is ln 5 ≈ 1.61 (using the natural logarithm, so the unit is nats). If we expand the space to 9 equally likely outcomes (A–I), there are more possibilities to distinguish among, so entropy increases to ln 9 ≈ 2.20. In general, a uniform distribution over n outcomes has entropy ln n, which is the maximum for that fixed number of outcomes and grows as n grows.
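As a quick check, the sketch below computes the entropy of a uniform distribution over n outcomes and compares it with ln n. It assumes the natural logarithm (nats); the function name `uniform_entropy` is made up for this illustration.

```python
import math

def uniform_entropy(n):
    """Entropy in nats of n equally likely outcomes, computed term by term."""
    probs = [1.0 / n] * n
    return sum(-p * math.log(p) for p in probs)

for n in (2, 5, 9):
    # The summed entropy should agree with the closed form ln(n).
    print(f"n={n}: entropy={uniform_entropy(n):.2f}, ln(n)={math.log(n):.2f}")
# n=2: entropy=0.69, ln(n)=0.69
# n=5: entropy=1.61, ln(n)=1.61
# n=9: entropy=2.20, ln(n)=2.20
```

With a base-2 logarithm (`math.log2`) the same distributions would give entropy in bits instead, for example about 3.17 bits for 9 outcomes.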

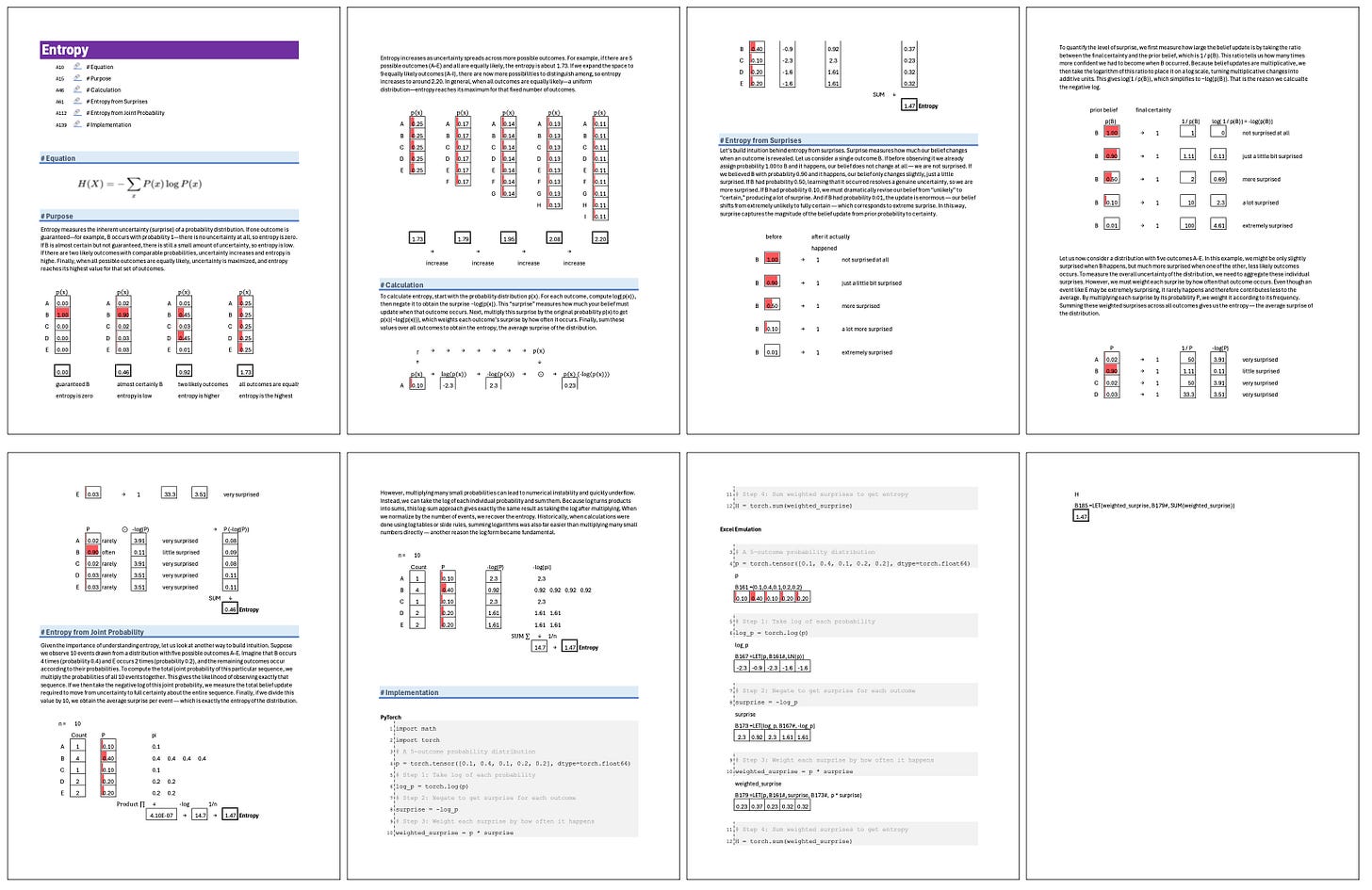

Excel Blueprint

This Excel Blueprint is available to AI by Hand Academy members. You can become a member via a paid Substack subscription.