GLU

Activation series · 11 of 12

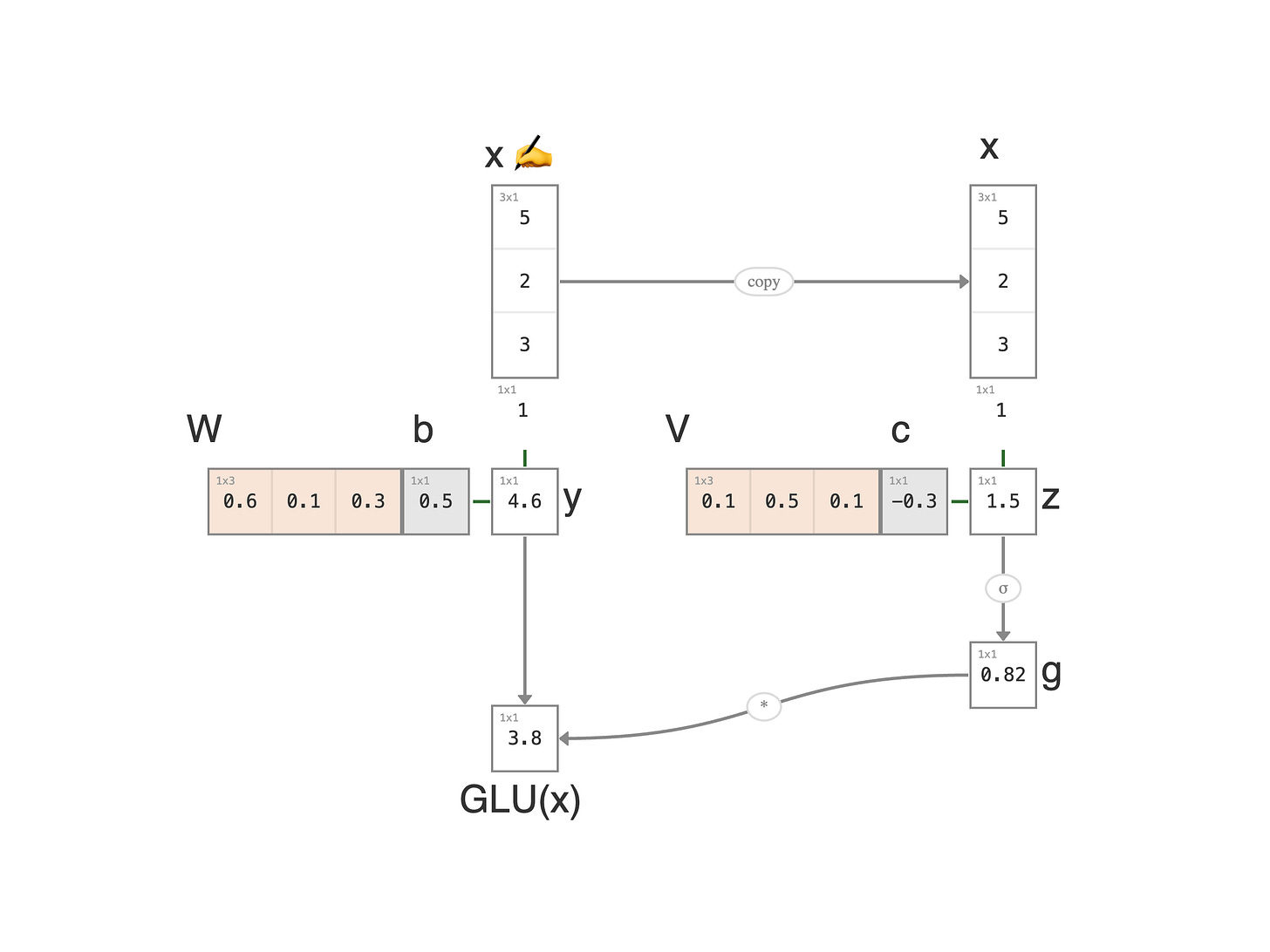

GLU is the first activation in this chapter where the network decides how much of a value to let through rather than only reshaping it. The same input runs through two learned linear transforms: one produces a value, and the other, after a sigmoid, produces a 0..1 mask. The two results are then multiplied elementwise.

Typhoon Aftermath (3 of 4)

The first two storms in this arc were about measurement: combining damages across categories (LSE), and separating a real storm from a calm baseline (softplus). The next beat is response.

Above the Keelung River, just north of Taipei, sits the Yuanshan Pumping Station. Yuanshan means "round mountain." A low green hill rises right at the river's bend. I used to run along the levee wall there on weekend mornings: a long, quiet park between the river and the city, the pumping station sitting at one end like a stone fortress. On calm days you can walk right up to it, lean against the wall, and forget what it's there for. On typhoon days, those pumps are the only thing keeping downtown dry, pushing water from the inland side back over the levee and out into the river before the streets flood.

When a typhoon's rain forecast lands at the control center, the same incoming data is split into two parallel computations. One stream estimates the incoming water volume (millions of cubic meters about to pile up against the inland side of the wall). The other stream produces a number between 0 and 1: how much pump power to engage. Zero is off; one is full rated capacity. The actual water pushed back over the wall is the product of those two: volume × pump fraction.

That's GLU. The same storm data feeds two learned linear transforms; one branch becomes a value, the other passes through a sigmoid to become a 0..1 control; the two are multiplied elementwise. The network learns over many storms which inflows warrant which openings: the gate is learned, not hand-tuned by the engineer.
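
Written compactly, the description above is one line of math. This is a sketch in the same symbols the walkthrough uses (`W`, `b` for the value branch; `V`, `c` for the gate branch); the `⊙` for elementwise multiplication is notation added here, not taken from the figures.

```latex
\mathrm{GLU}(x) = (Wx + b) \odot \sigma(Vx + c),
\qquad
\sigma(z) = \frac{1}{1 + e^{-z}}
```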

It's the difference between a network that reports the weather and a network that turns on the pump. LSE and softplus told us how bad the storm was; GLU decides what to do about it.

Walking through the Math

1. Input: a feature vector `x = (5, 2, 3)`: the storm's rainfall, tide, and wind. We append a 1 at the bottom to absorb the bias into the matrix multiply, a standard trick that lets us treat the weights and the bias as a single weight row.

2. Severity (left branch): y = Wx + b. A learned linear combination of the features plus a bias. The severity row weights rainfall most heavily and adds a bias of 0.5: 0.6 × 5 + 0.1 × 2 + 0.3 × 3 + 0.5 × 1 = 4.6.

3. Gate input (right branch, in parallel): z = Vx + c. A different linear combination of the same features. The gate row weights tide most heavily and applies a slight negative bias: 0.1 × 5 + 0.5 × 2 + 0.1 × 3 + (-0.3) × 1 = 1.5.

4. Sigmoid gate: g = σ(z) = 1 / (1 + e^(-1.5)) ≈ 0.82, a number between 0 and 1 saying how much of the severity to pass through.

5. Multiply: o = y × g = 4.6 × 0.82 ≈ 3.8. The GLU output: severity scaled by the gate's decision.

Steps 2 and 3 happen in parallel; the two branches never see each other until the multiply at step 5. That single multiplication is where the gating actually happens; everything before is just two independent linear layers.
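
The five steps can be checked in a few lines of plain Python. This is a minimal sketch, not a production layer: the weights are the ones from steps 2 and 3, while the names `severity_row`, `gate_row`, `dot`, and `sigmoid` are chosen here for illustration.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dot(row, vec):
    """Dot product of one weight row with the (augmented) input."""
    return sum(w * v for w, v in zip(row, vec))


# Step 1: input features (rainfall, tide, wind), with a 1 appended
# so the bias can live in the last slot of each weight row.
x = [5.0, 2.0, 3.0]
x_aug = x + [1.0]

# Weight rows from the walkthrough; the last entry of each is the bias.
severity_row = [0.6, 0.1, 0.3, 0.5]   # W and b
gate_row = [0.1, 0.5, 0.1, -0.3]      # V and c

y = dot(severity_row, x_aug)  # Step 2: severity
z = dot(gate_row, x_aug)      # Step 3: gate input
g = sigmoid(z)                # Step 4: gate in (0, 1)
o = y * g                     # Step 5: gated output

print(f"y = {y:.2f}, z = {z:.2f}, g = {g:.2f}, o = {o:.2f}")
```

Carrying the unrounded gate through gives o ≈ 3.76, which agrees with the rounded 3.8 in step 5.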

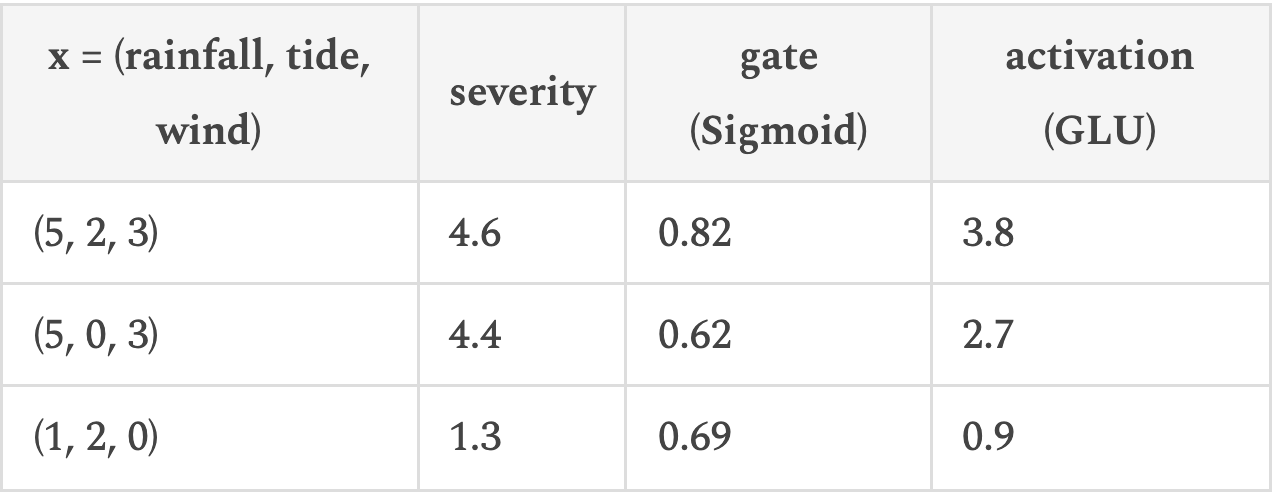

Reading the Numbers

The control center pulls in three features for any given storm: rainfall intensity, tide level above normal, and wind speed. Each storm is a feature vector `x = (rainfall, tide, wind)`. Both branches see the same `x`, but each branch learns its own weights. The severity branch ends up weighting rainfall most heavily. Rain is the incoming water. The gate branch ends up weighting tide most heavily. At high tide the river can't drain into the sea naturally, so the pumps need to engage with urgency.

The first row is a heavy storm at high tide. Both branches fire; pumps run hard. The second is the same storm at low tide. The severity barely changes, but the gate drops sharply because the river can drain into the sea on its own. The third is light rain at high tide. Severity is small, the gate is moderate, the pumps barely run.

A plain linear layer would only give us the severity column: three numbers, each a weighted sum of the input plus a bias, with no context awareness. The gate column is the whole reason GLU exists: it lets one feature (tide) decide whether another (rainfall) actually triggers a response.
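
To see the tide acting as the switch, the same weights can be run over several storms. This is a sketch under loud assumptions: only the first feature vector comes from the walkthrough; the other two storms are hypothetical values invented here to mimic "same storm, low tide" and "light rain, high tide", and they are not the article's table. The helper name `glu` is also illustrative.

```python
import math

# Weight rows from the walkthrough; the last entry of each is the bias.
SEVERITY_ROW = [0.6, 0.1, 0.3, 0.5]
GATE_ROW = [0.1, 0.5, 0.1, -0.3]


def glu(x):
    """Return (severity, gate, output) for one storm's features."""
    x_aug = list(x) + [1.0]  # append 1 to absorb the bias
    y = sum(w * v for w, v in zip(SEVERITY_ROW, x_aug))
    z = sum(w * v for w, v in zip(GATE_ROW, x_aug))
    g = 1.0 / (1.0 + math.exp(-z))
    return y, g, y * g


# (rainfall, tide, wind). Only the first row is from the walkthrough;
# the other two are hypothetical illustrations.
storms = {
    "heavy rain, high tide": (5.0, 2.0, 3.0),
    "heavy rain, low tide": (5.0, -2.0, 3.0),   # hypothetical
    "light rain, high tide": (1.0, 2.0, 1.0),   # hypothetical
}

for name, x in storms.items():
    y, g, o = glu(x)
    print(f"{name:22s} severity={y:.2f} gate={g:.2f} output={o:.2f}")
```

With these made-up inputs, lowering the tide moves severity only slightly but cuts the gate by more than half, which is the behavior the paragraph above describes.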
