ReLU

Activation series · 1 of 4


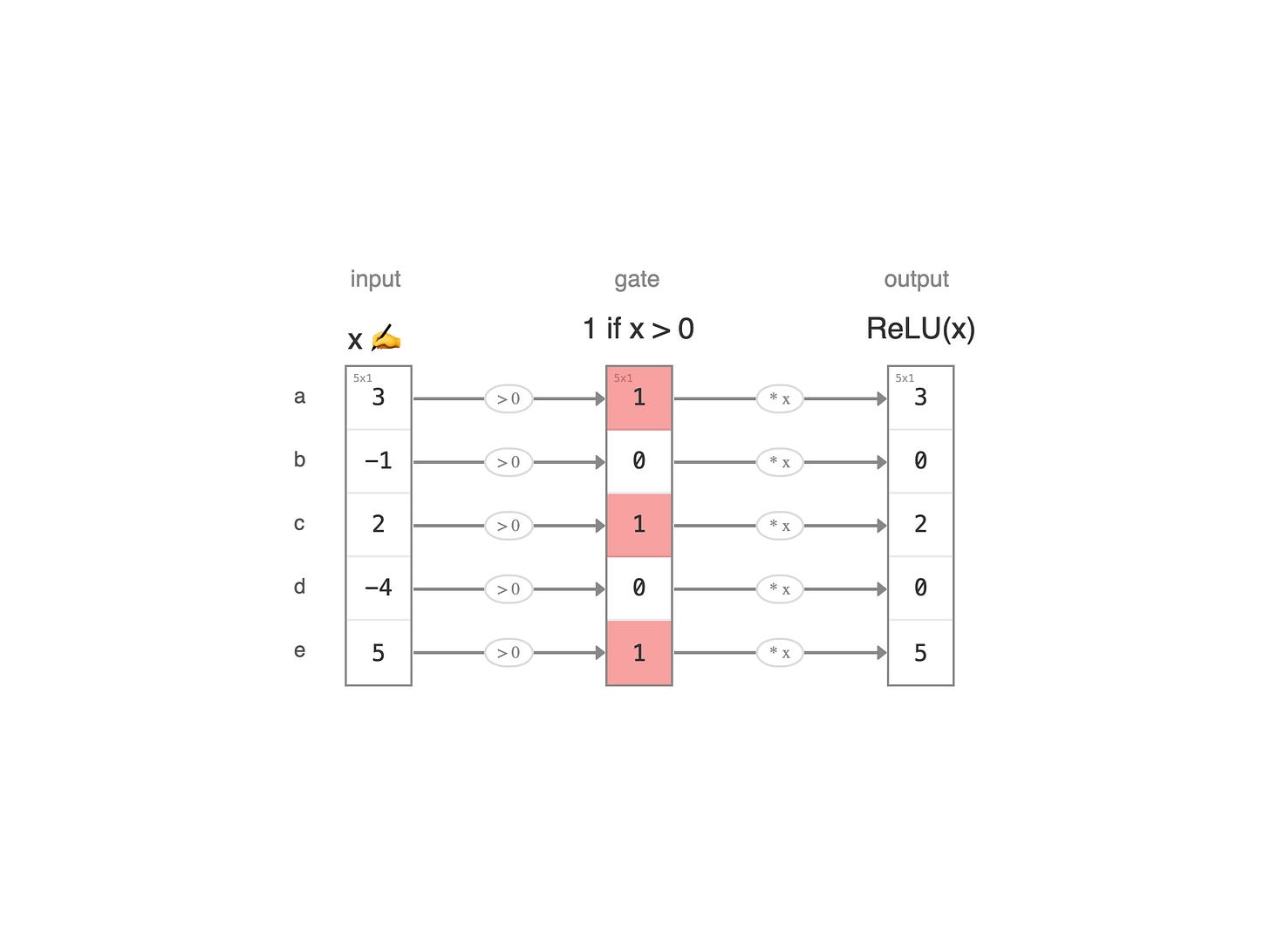

ReLU is a common default activation in modern deep learning: it is cheap to compute, and it behaves well enough during training to be used in networks hundreds of layers deep. To see what it does, picture five boba tea shops on the same block, `a`, `b`, `c`, `d`, and `e`, each keeping its own books.

Each value is a shop's monthly profit: receipts minus rent, ingredients, and wages. When profit is positive, the shop stays open and the owner pockets every dollar. When profit turns negative, the shop runs out of cash and shutters: the lights go off and the books are wiped to zero. ReLU is exactly that rule, applied one shop at a time. In symbols, `ReLU(x) = max(0, x)`.

Read the diagram left to right. The first column is the raw value x — each shop's profit at month's end. The second column is the gate: 1 if the shop is open (x > 0), 0 if it has shuttered. The last column is the ReLU output: open shops pass their profit through untouched, while shuttered ones are zeroed out.
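The three columns can be sketched in a few lines of plain Python. The profit figures below are made up for illustration and are not the values from the diagram, and `gate` and `relu` are names chosen for this sketch rather than any library's API.

```python
# Hypothetical monthly profits for the five shops (illustrative values only).
profits = {"a": 3.0, "b": -1.5, "c": 0.0, "d": 2.0, "e": -4.0}


def gate(x):
    """Second column: 1 if the shop is open (x > 0), otherwise 0."""
    return 1 if x > 0 else 0


def relu(x):
    """Third column: pass x through when the gate is open, otherwise zero."""
    return x if gate(x) else 0.0


# Print one row per shop: raw value, gate, ReLU output.
for name, x in profits.items():
    print(f"{name}: x={x:+.1f}  gate={gate(x)}  relu={relu(x):.1f}")
```

Note that shop `c`, which breaks even at exactly zero, gets a gate of 0 under the `x > 0` rule. Its output is zero either way, so the choice does not change the result.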

Five rows means five parallel shops on the same block, each evaluated independently. That's why ReLU is called an element-wise activation: every neuron decides its own fate.
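One way to check the "every neuron decides its own fate" claim is to change a single shop's profit and confirm that only that shop's output moves. This is a minimal sketch with invented numbers, and `relu_vector` is a hypothetical helper name.

```python
def relu(x):
    """Scalar ReLU: max(0, x)."""
    return max(0.0, x)


def relu_vector(values):
    """Apply ReLU to each entry independently of the others."""
    return [relu(v) for v in values]


# Illustrative profits for shops a..e (not taken from the diagram).
before = [3.0, -1.5, 0.5, 2.0, -4.0]

# Shop b (index 1) turns a profit; the other four inputs are unchanged.
after = list(before)
after[1] = 1.5

out_before = relu_vector(before)
out_after = relu_vector(after)

# Indices whose output differs between the two runs.
changed = [i for i, (p, q) in enumerate(zip(out_before, out_after)) if p != q]
print(changed)  # → [1]
```

Only index 1 changes, because no output reads any input other than its own.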
