Leaky ReLU

Activation series · 2 of 4


Plain ReLU clamps negative values to zero. That is clean, but a unit that goes dark (a shop that shutters) may never recover: as long as its input stays negative, both its output and its gradient are pinned at zero, so training has no signal with which to reopen it. This is the dying ReLU problem, and in deep networks it can quietly kill a meaningful fraction of the units.

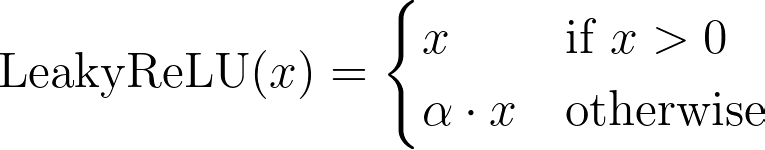

Leaky ReLU is the one-line fix: instead of shuttering, the shop files for Chapter 11 protection and keeps the lights on at reduced capacity. Its loss is restructured down to a small fraction α (0.1 in this walkthrough; libraries often default to something smaller, such as 0.01) and the rest is forgiven, so the shop is wounded, not killed. A small negative signal still flows through, so the gradient on the negative side is α rather than zero, and the shop can crawl back to life if a TikTok goes viral.
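To make "the gradient survives" concrete, here is a minimal NumPy sketch comparing the two derivatives. The function names and the sample inputs are made up for illustration, and α = 0.1 is assumed to match the value used on this page.

```python
import numpy as np

def relu_grad(x):
    # Derivative of ReLU: 1 where x > 0, otherwise 0.
    return np.where(x > 0, 1.0, 0.0)

def leaky_relu_grad(x, alpha=0.1):
    # Derivative of Leaky ReLU: 1 where x > 0, otherwise alpha.
    # alpha=0.1 is an assumption taken from the walkthrough.
    return np.where(x > 0, 1.0, alpha)

# Illustrative pre-activations: two shops in the red, one in the black.
x = np.array([-2.0, -0.5, 1.5])

print(relu_grad(x).tolist())        # [0.0, 0.0, 1.0]
print(leaky_relu_grad(x).tolist())  # [0.1, 0.1, 1.0]
```

With plain ReLU the two negative units receive no gradient at all. With the leak they receive a small one, which is what lets their weights keep moving.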

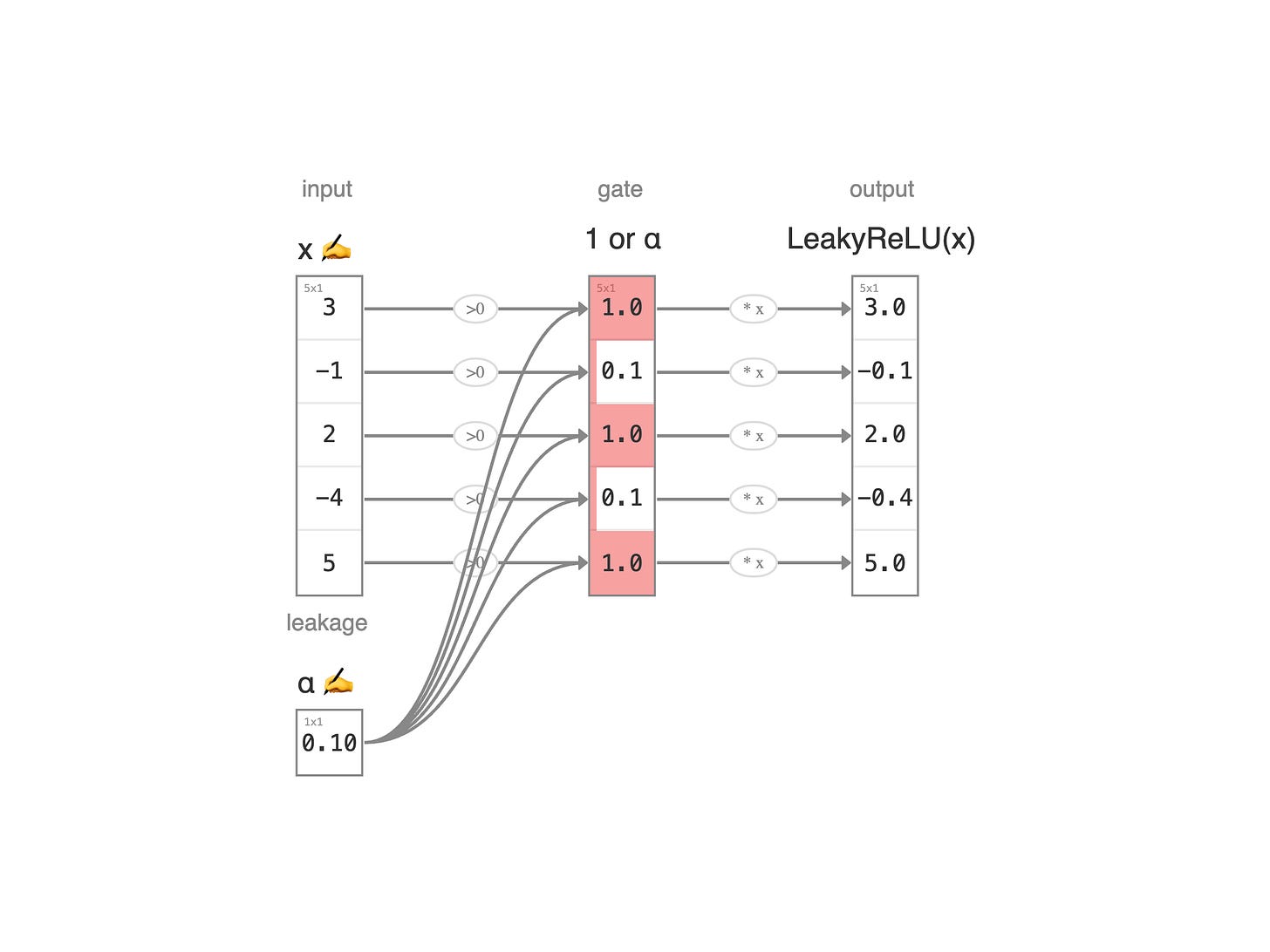

Read the diagram left to right. The first column is the raw value x: each shop's profit at month's end. The second column is the leakage α: the fraction of the loss held over after restructuring (default 0.1, editable). The third column is the gate: 1 for shops still in the black, α for those operating under bankruptcy protection. The last column is the Leaky ReLU output: y = x · gate. Profitable shops pass through untouched; struggling ones shrink by a factor of α but keep their negative sign.
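The column-by-column reading above can be sketched in a few lines of NumPy. The five profit values are hypothetical stand-ins for the numbers in the diagram, and `leaky_relu_columns` is a name invented here for illustration.

```python
import numpy as np

def leaky_relu_columns(x, alpha=0.1):
    """Return the gate column and the output column for inputs x."""
    x = np.asarray(x, dtype=float)
    # Gate: 1 for positive inputs, alpha otherwise.
    # An input of exactly 0 gets alpha, but its output is 0 either way.
    gate = np.where(x > 0, 1.0, alpha)
    # Output: y = x * gate, computed independently for each element.
    y = x * gate
    return gate, y

# Hypothetical month-end profits for five shops.
profits = [3.0, -2.0, 0.5, -4.0, 1.0]
gate, y = leaky_relu_columns(profits)

for x_i, g_i, y_i in zip(profits, gate, y):
    print(f"x={x_i:5.1f}  gate={g_i:4.1f}  y={y_i:5.2f}")
```

Writing the activation as an explicit gate mirrors the diagram. An equivalent one-liner is `np.where(x > 0, x, alpha * x)`.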

Five rows means five parallel shops, each evaluated independently. Like ReLU, this is an element-wise activation: every neuron's fate is decided on its own merits.

← Previous: ReLU · Next: Softmax →