Softmax

Activation series · 3 of 4

Softmax is how deep networks turn raw scores into a probability distribution. It is the usual final layer of a multi-class classifier, and it sits at the core of the attention heads in a standard transformer. To see what it does, picture five boba tea shops on the same block, all competing for your dollar. The five candidates are a, b, c, d, and e: different chains, different brewing styles, different pearls. A boba reviewer hands you a chewiness score for each shop. Higher means perfectly chewy "QQ" pearls with the right bite (ask a Taiwanese friend to find out what QQ means). Negative scores are real too: mushy boba, overcooked pearls, a batch left sitting too long.

How do you turn five chewiness scores into an allocation that adds to a whole dollar? You could spend everything at the chewiest shop, but that ignores how good the runners-up are. Softmax is the smooth alternative.

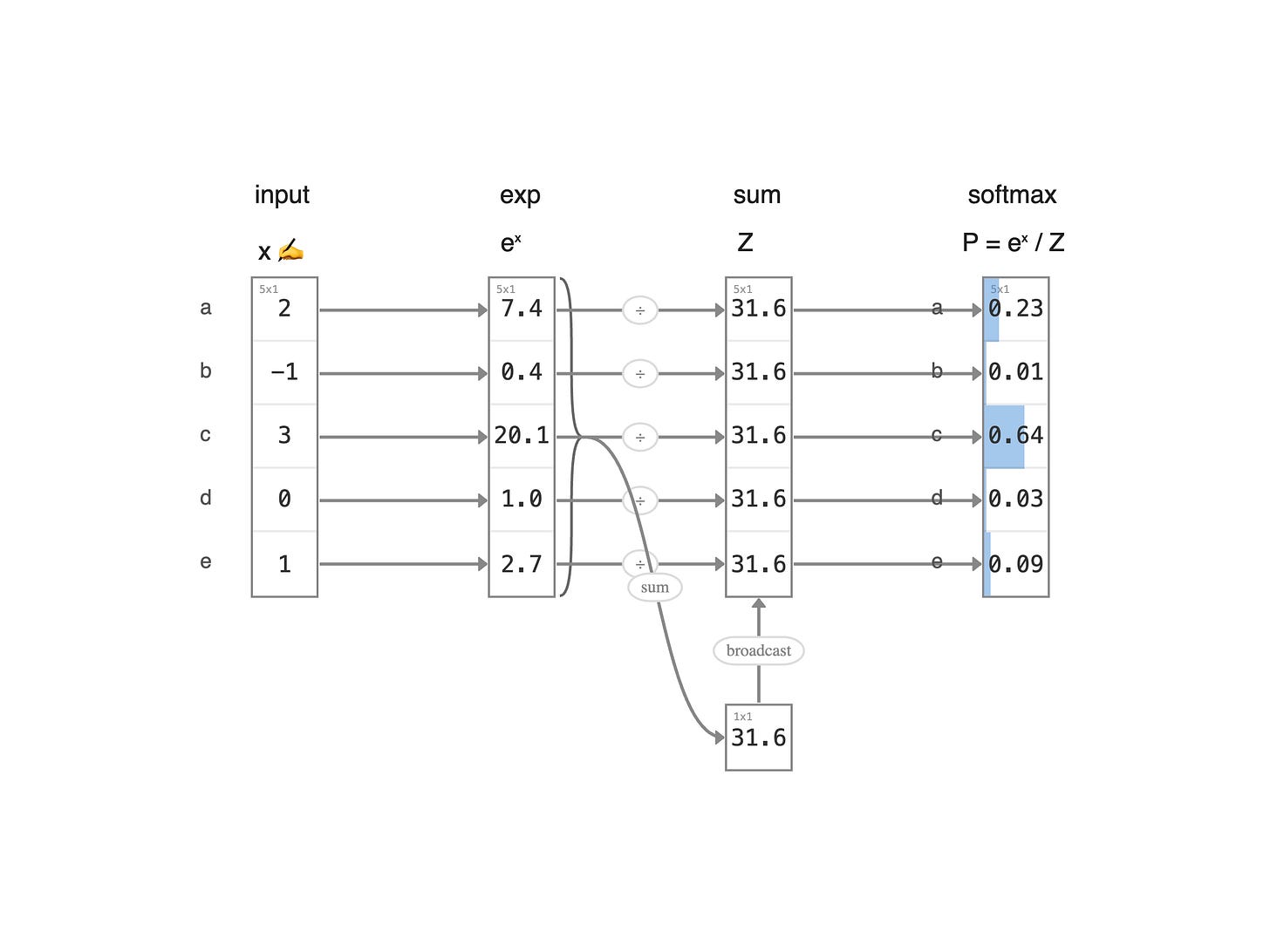

Read the diagram left to right. First, exponentiate each score x to get e^{x}. This does two things: it turns negative chewiness into small positive numbers, and it stretches the gaps between scores, because a fixed difference between two scores becomes a fixed ratio after exponentiation. Then sum all five exponentials into a single total Z. Finally, divide each e^{x} by Z to get a probability. The five probabilities add up to one, so you can read them as percentages of your dollar. The chewiest shop gets the biggest slice, but never the whole dollar, because every e^{x} is strictly positive. That's the point of softmax: it ranks confidently while still leaving room for the others.
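The three steps (exponentiate, sum, divide) fit in a few lines of Python. This is a minimal sketch, not a production implementation: the five chewiness scores are made-up values chosen for illustration, and the `softmax` helper is our own name. It also subtracts the largest score before exponentiating, a common numerical-stability trick that the diagram does not show; it leaves the final probabilities unchanged.

```python
import math

def softmax(scores):
    """Turn a list of real-valued scores into probabilities that sum to 1."""
    # Shifting by the max does not change the result (the factor cancels in
    # the division) but keeps math.exp from overflowing on large scores.
    shift = max(scores)
    exps = [math.exp(x - shift) for x in scores]   # step 1: exponentiate
    z = sum(exps)                                  # step 2: sum (Z, up to the shift factor)
    return [e / z for e in exps]                   # step 3: divide

# Hypothetical chewiness scores for shops a-e (illustrative, not from the diagram)
scores = {"a": 2.0, "b": 1.0, "c": 0.0, "d": -1.0, "e": -2.0}
shares = dict(zip(scores, softmax(list(scores.values()))))

for shop, share in shares.items():
    print(f"{shop}: ${share:.2f}")
```

With these assumed scores, shop a gets about 64 cents of the dollar and shop e still gets about one cent, even though its score is negative. Each one-point gap in score becomes a factor of e (roughly 2.7) in spending.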
