Sigmoid

Activation series · 4 of 4


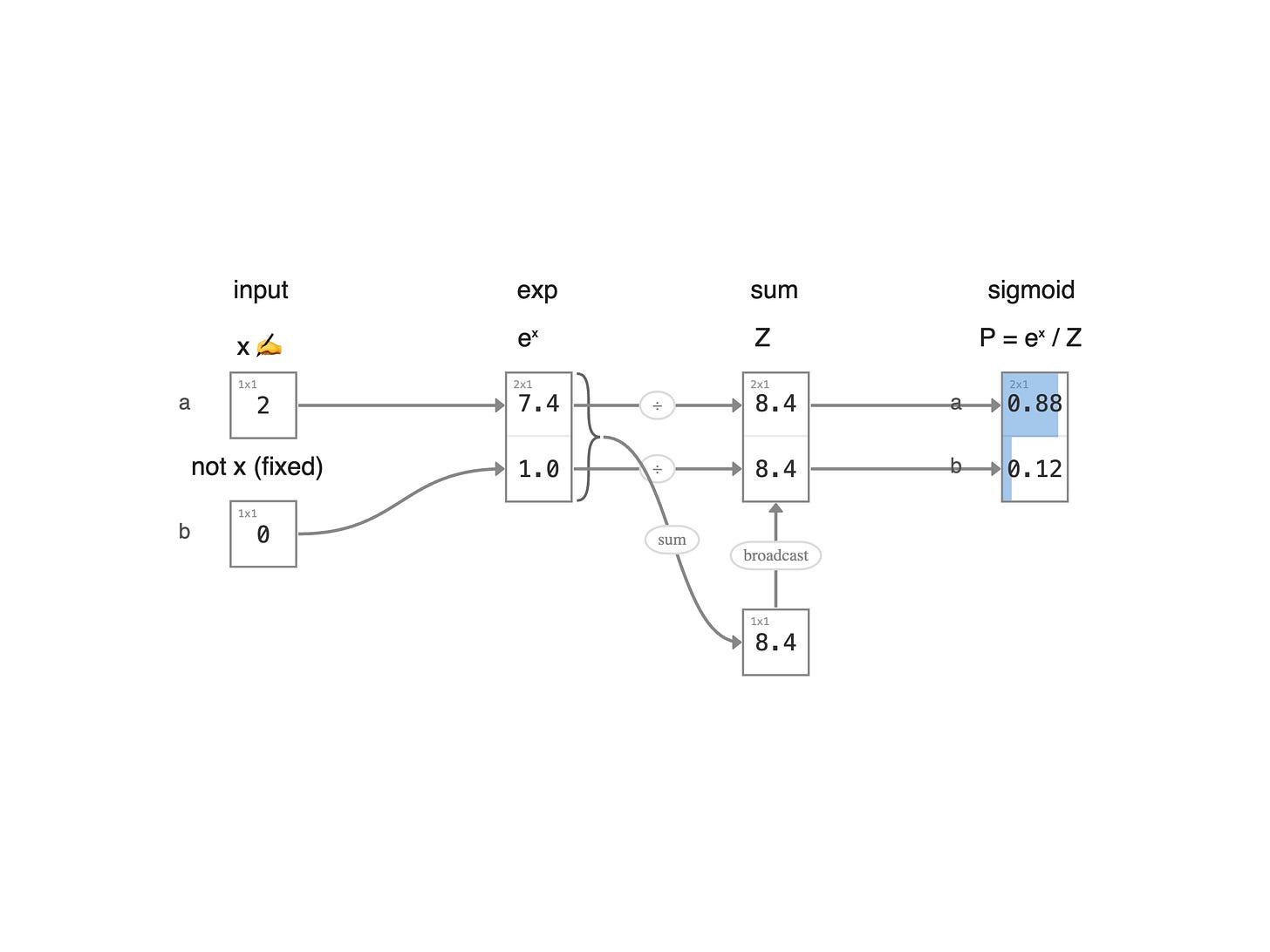

Sigmoid squashes any real number into a probability between 0 and 1 — the classic activation for binary classification, and still the gating function inside LSTMs and GRUs. Same boba block as the previous Softmax example, narrowed to just two contenders — a hot new shop `a` with chewiness score x, and your usual go-to `b` whose score is pinned at zero (the neutral baseline you've come to expect).

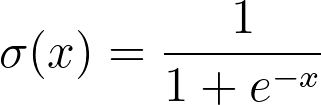

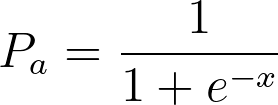

Sigmoid is just softmax with two players, one of them pinned to zero. Apply softmax to the pair [x, 0]. The probability for `a` is:

P(a) = e^{x} / (e^{x} + e^{0})

Since e^0 = 1, this simplifies to:

P(a) = e^{x} / (e^{x} + 1)

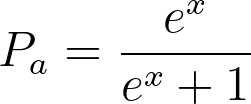

Multiply top and bottom by e^{-x} to get the canonical form:

σ(x) = 1 / (1 + e^{-x})

That's sigmoid.

Read the diagram left to right. First, exponentiate each score: the new shop `a` gets e^{x}, while the usual shop `b`, whose score is zero, gets e^0 = 1 (the constant baseline). Then sum the two into a total Z = e^{x} + 1. Finally, divide each exponential by Z to get a probability. The two probabilities add up to one: the new shop wins more of your dollar as its pearls get chewier, and your usual keeps the rest. That's the point of sigmoid: it turns a single chewiness score into a clean 0-to-1 chance you'll try the new place over your usual.
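The three steps in the diagram can be sketched in a few lines of Python. This is a minimal illustration rather than anything from the original post: the function names `two_way_softmax` and `sigmoid` are made up for this example, and it uses only the standard `math` module. It walks through exponentiate, sum, divide for the pair [x, 0], then checks that the result for `a` matches the canonical 1 / (1 + e^{-x}) form.

```python
import math


def two_way_softmax(x):
    """Softmax over the pair [x, 0], following the diagram's three steps.

    Hypothetical helper for illustration; returns (P(a), P(b)).
    """
    exp_a = math.exp(x)    # new shop `a`: e^x
    exp_b = math.exp(0.0)  # usual shop `b`: e^0 = 1, the constant baseline
    z = exp_a + exp_b      # total Z
    return exp_a / z, exp_b / z


def sigmoid(x):
    """Canonical sigmoid form: 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))


for x in (-2.0, 0.0, 2.0):
    p_a, p_b = two_way_softmax(x)
    # The two-player softmax and the canonical sigmoid should agree.
    assert math.isclose(p_a, sigmoid(x))
    # The two probabilities should add up to one.
    assert math.isclose(p_a + p_b, 1.0)
    print(f"x={x:+.1f}  P(a)={p_a:.3f}  P(b)={p_b:.3f}")
```

At x = 0 the new shop is exactly as good as the baseline, so the split is 0.5 each. As x grows, P(a) climbs toward 1 without ever reaching it.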
