Seminar next week ~ Google's Gemma 4

Frontier Model Seminar Series #1

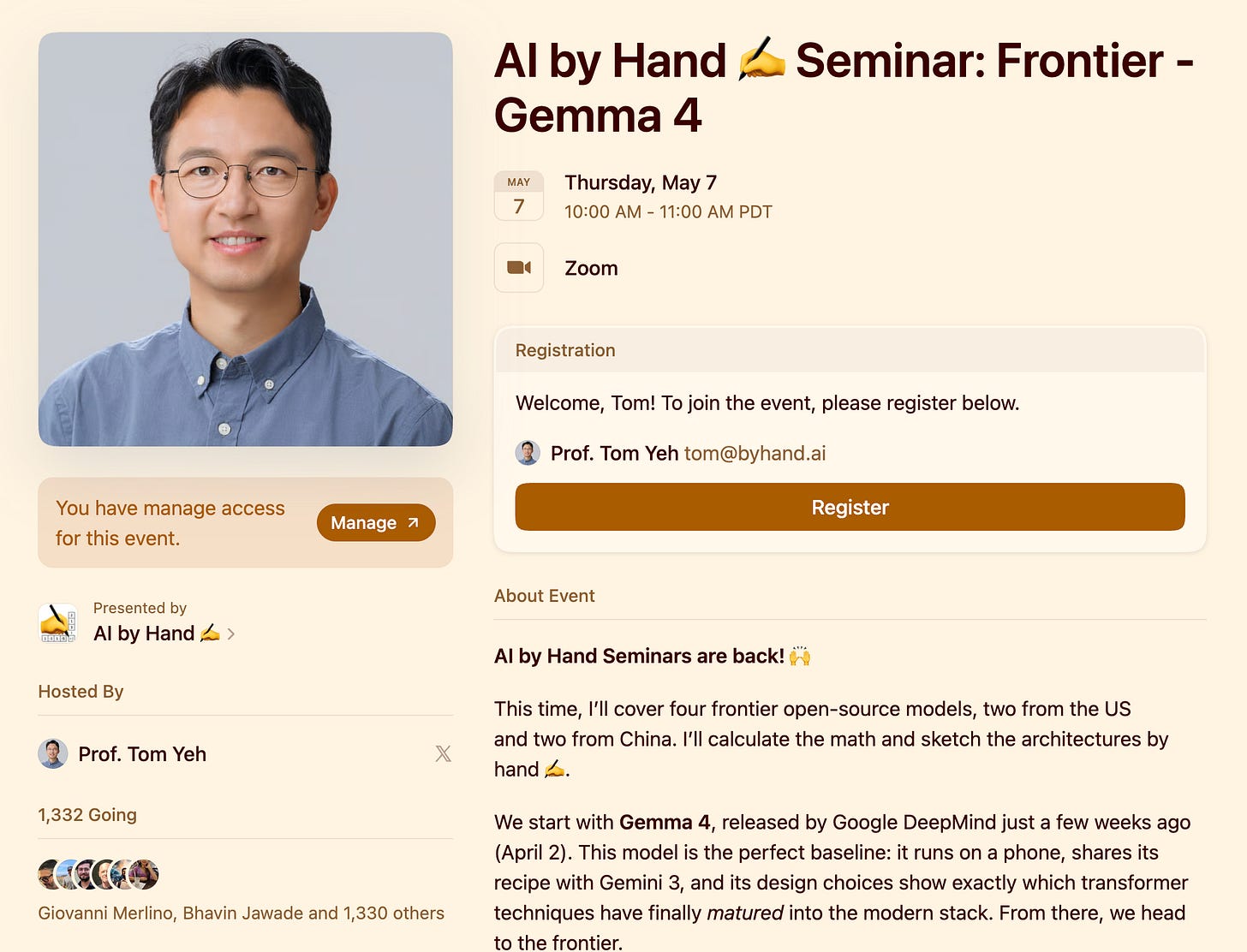

AI by Hand ✍️ Seminars are back! 🙌

This time, I’ll cover four frontier open-source models, two from the US and two from China. I’ll work through the math and sketch the architectures by hand ✍️.

We start with Gemma 4, released by Google DeepMind just a few weeks ago (April 2). This model is the perfect baseline: it runs on a phone, shares its recipe with Gemini 3, and its design choices show exactly which transformer techniques have finally matured into the modern stack. From there, we head to the frontier.

Date: Thursday, May 7

Time: 10am (Pacific Time)

Upcoming Seminars

Week 2 — Qwen 3.5 (Alibaba, China): long-context scaling techniques and attention optimizations improving practical context length.

Week 3 — Nemotron 3 Super (NVIDIA, US): large-scale transformer systems, with emerging directions toward state-space alternatives like Mamba.

Week 4 — DeepSeek-V4 (DeepSeek, China): a 1.6T-parameter MoE with Compressed Sparse Attention for 1M-token context and three test-time reasoning modes, advancing chain-of-thought at the open-source frontier. Released just in time for this special seminar series. 😉

Previous Seminars

🔥 PPO→DPO→GRPO→Rubrics (2/26/2026)

🔥 OpenClaw - 12 Stages of Evolution from the Transformer (2/19/2026)

🔥 Transformer - Six Levels of Understanding (2/12/2026)

Meta Superintelligence Labs vs Facebook AI Research (2/5/2026)

9 AI Eval Formulas (1/29/2026)

Google Ironwood TPU: From Bits to HBM (1/22/2026)

How AWS Uses Small Models to Learn Tool Use (1/15/2026)

Attention (1/15/2026)

DeepSeek’s Manifold-Constrained Hyper Connection (mHC) (1/8/2026)

Introduction to Generative AI (1/8/2026)

Gated Attention (NeurIPS 2025 Best Paper) (12/16/2025)