Pretrain vs Fine-Tune

Fine-Tuning series: 2 of 8


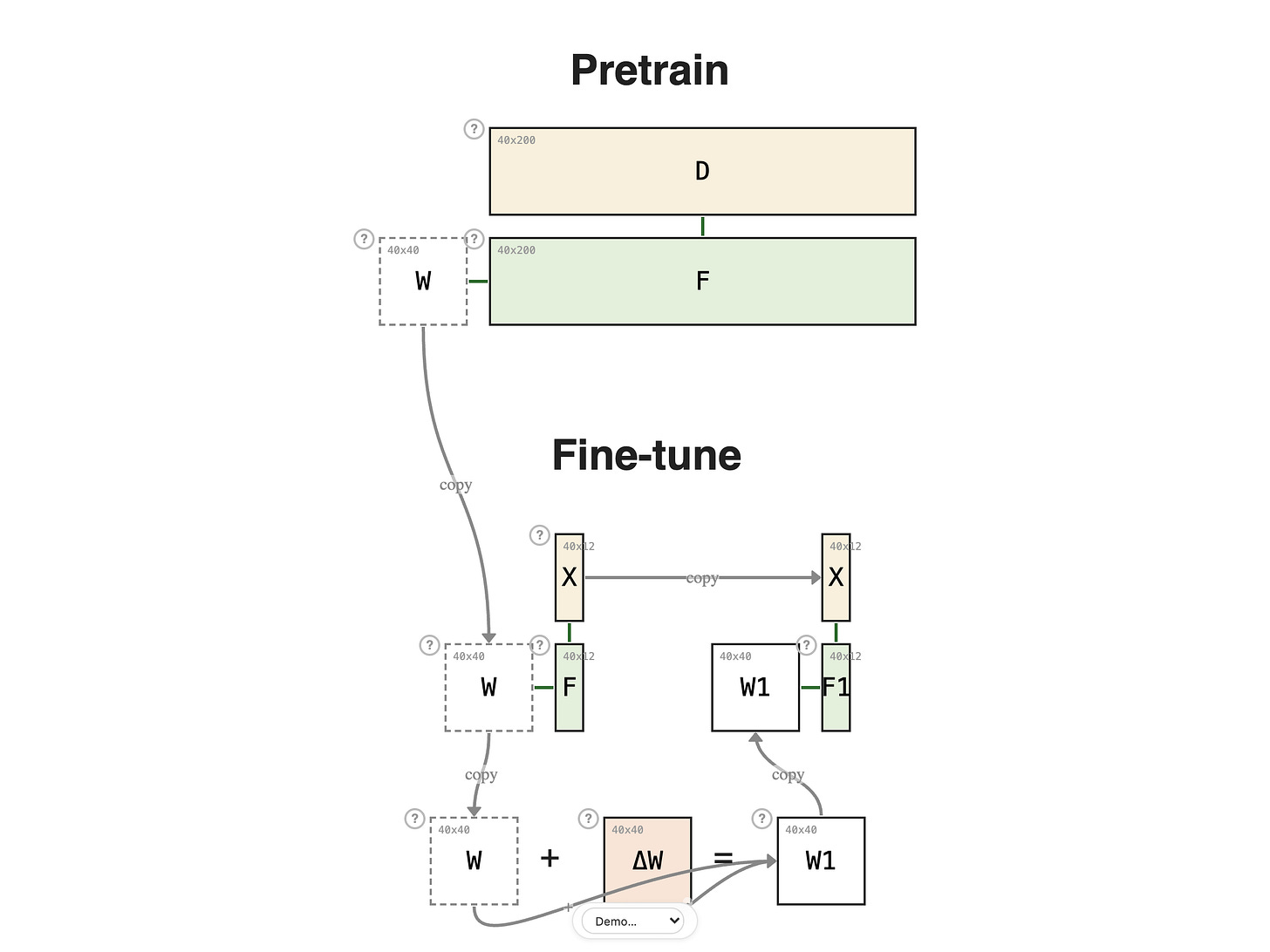

The previous lesson showed W + ΔW = W1 as a single abstract step. That same step shows up in two very different settings, and the setting is what separates pretraining from fine-tuning.

In pretraining, the dataset D is massive and general-purpose: say 200 examples per batch, repeated over a very large number of training steps. Each ΔW is tiny, but the cumulative effect is a weight matrix that has absorbed the broad patterns in the data. At that point, W is the model's foundational knowledge.
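To make "tiny steps, large cumulative effect" concrete, here is a toy NumPy sketch. It is not real pretraining: the linear model, the hidden matrix `W_true`, the learning rate, and the 500 steps (a stand-in for a far longer run) are all assumptions made for illustration. Only the batch size of 200 and the 40 × 40 shape come from the lesson.

```python
import numpy as np

rng = np.random.default_rng(0)
d, batch, steps, lr = 40, 200, 500, 0.01  # 500 steps stands in for a far longer run

W_true = rng.normal(size=(d, d))  # hypothetical "broad pattern" hidden in the data
W_start = np.zeros((d, d))        # untrained weights
W = W_start.copy()
largest_step = 0.0

for _ in range(steps):
    X = rng.normal(size=(batch, d))    # one fresh batch of 200 examples
    Y = X @ W_true                     # targets produced by the hidden pattern
    grad = X.T @ (X @ W - Y) / batch   # gradient of 0.5 * ||XW - Y||^2 / batch
    delta_W = -lr * grad               # one tiny update
    W += delta_W                       # W + ΔW becomes the new W
    largest_step = max(largest_step, float(np.linalg.norm(delta_W)))

total_change = float(np.linalg.norm(W - W_start))
print(f"largest single step: {largest_step:.3f}")
print(f"total change in W:   {total_change:.3f}")
```

No single update moves W very far, yet the accumulated change is what carries W from its starting point to something close to the pattern in the data.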

In fine-tuning, the dataset is much smaller — maybe just 12 task-specific examples. You don't want to throw out everything W has learned, and 12 examples is nowhere near enough to relearn it from scratch anyway. You want to add a small specialization on top.

I once told my master's students, jokingly, "You are all fine-tuning here." You don't go back to re-learn calculus — you build on the undergraduate foundation and specialize. Fine-tuning does the same for a model: keep the foundational W, and add a small correction on top.

Concretely, freeze W and run the task data X through it. Writing X with one example per row (a convention assumed here), the forward pass is F = XW.
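A minimal NumPy sketch of this frozen forward pass follows. The random values are placeholders for real pretrained weights and real task data, and the layout (12 examples as rows of X, output computed as `X @ W`) is an assumption rather than something the lesson fixes.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 12, 40  # 12 task examples, 40 x 40 weight matrix

W = rng.normal(size=(d, d)) / np.sqrt(d)  # random stand-in for pretrained weights
W.setflags(write=False)                   # "freeze": any in-place write now raises an error

X = rng.normal(size=(n, d))               # placeholder task data, one example per row
F = X @ W                                 # generic output from the frozen model
print(F.shape)  # (12, 40)
```

Marking the array read-only is just one way to express "frozen" in plain NumPy; training frameworks usually do this by excluding the parameter from gradient updates.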

The output F is reasonable but generic: W has never seen your task, so the prediction is whatever a general-purpose model would say. To specialize, we add an adjustment, a new matrix ΔW with the same shape as W (40 × 40), trained only on the task data while W stays put. This is the step from the previous lesson: W + ΔW = W1.
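The sketch below shows one way "train ΔW while W stays put" could look in NumPy. It assumes a plain linear model, a squared-error loss, hand-written gradient descent, and random placeholder targets `Y`. None of these are specified by the lesson, so treat it as an illustration of the idea rather than the method used in this series.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 12, 40

W = rng.normal(size=(d, d)) / np.sqrt(d)  # random stand-in for pretrained weights
W.setflags(write=False)                   # frozen: never updated below
X = rng.normal(size=(n, d))               # 12 task examples, one per row
Y = rng.normal(size=(n, d))               # hypothetical task targets

delta_W = np.zeros((d, d))                # the only trainable matrix, same shape as W
lr = 0.1                                  # assumed learning rate

def loss(dW):
    """Squared error of the adjusted model, averaged over the examples."""
    return 0.5 * np.sum((X @ (W + dW) - Y) ** 2) / n

loss_before = loss(delta_W)
for _ in range(300):
    residual = X @ (W + delta_W) - Y      # forward pass uses W + ΔW
    grad = X.T @ residual / n             # gradient with respect to ΔW only
    delta_W -= lr * grad                  # update ΔW; W is untouched
loss_after = loss(delta_W)

print(f"loss before: {loss_before:.4f}")
print(f"loss after:  {loss_after:.6f}")
```

Because ΔW starts at zero, the first forward pass is exactly the generic output of the frozen model, and everything learned afterwards lives in ΔW.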

Now the forward pass uses the fine-tuned weight: the task data X is multiplied by W1 = W + ΔW instead of by W alone.

ΔW is the entire artifact of fine-tuning — the per-task adjustment learned from 12 examples, layered on top of months or years of pretraining.
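As a small check of what "layered on top" means, the sketch below compares the merged forward pass with the frozen output plus a separate correction, and then stores ΔW by itself. The random matrices are placeholders, and saving to an in-memory buffer is only for illustration; a real workflow would write ΔW to a file.

```python
import io
import numpy as np

rng = np.random.default_rng(0)
n, d = 12, 40

W = rng.normal(size=(d, d)) / np.sqrt(d)  # stand-in for pretrained weights
delta_W = 0.01 * rng.normal(size=(d, d))  # stand-in for a learned adjustment
X = rng.normal(size=(n, d))               # task data, one example per row

W1 = W + delta_W                          # fine-tuned weight
F_merged = X @ W1                         # forward pass with the merged weight
F_layered = X @ W + X @ delta_W           # generic output plus the task correction
print(np.allclose(F_merged, F_layered))

# ΔW can be stored on its own; W is reused unchanged across tasks.
buffer = io.BytesIO()
np.save(buffer, delta_W)
buffer.seek(0)
restored = np.load(buffer)
print(np.array_equal(restored, delta_W))
```

Both views give the same output, which is why a single pretrained W can be paired with a different ΔW for each task.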

Next:

3. Full Fine-Tuning