Weight Update

Fine-Tuning series: 1 of 8


When a neural network learns, what actually changes? Not the architecture — the shape of the network stays fixed. Not the inputs — those come from outside. What moves is the weights.

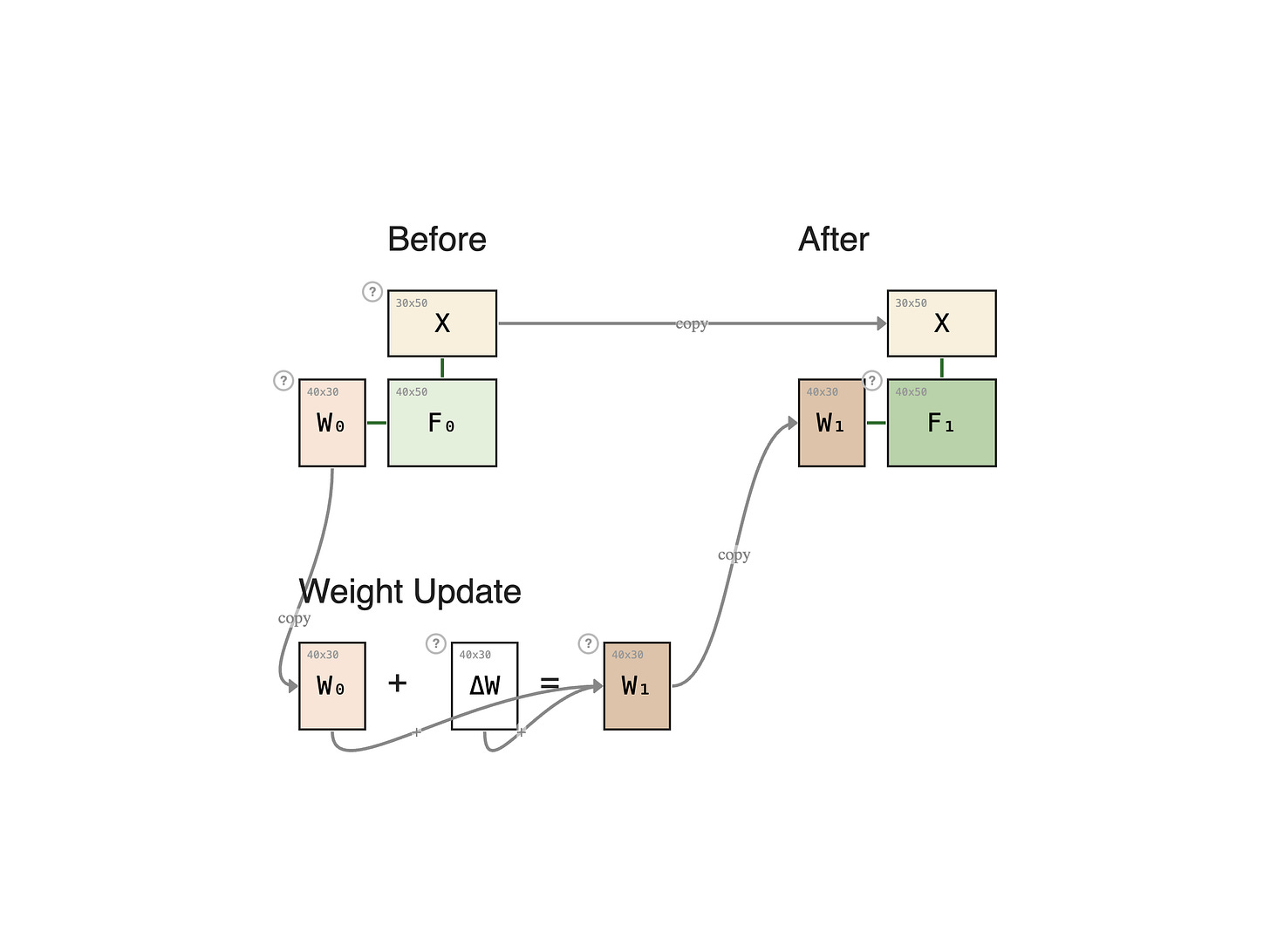

A training step takes the current weight matrix W0, computes a small nudge ΔW from a batch of examples, and adds the two together to produce the new weights:

$$W = W_0 + \Delta W$$

This single equation, adding a correction to the existing weights, underlies everything in this chapter. Pretraining, full fine-tuning, frozen layers, adapters, LoRA: they are all variations on which weights get a ΔW and how big that ΔW is allowed to be.
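To make the update concrete, here is a minimal sketch of one step, assuming a single linear layer y = W x trained with a mean-squared-error loss and plain gradient descent, so that ΔW = -lr · ∂L/∂W. The function names (`matvec`, `compute_delta`, `train_step`) and the `frozen` flag are hypothetical illustrations, not code from this course; `frozen=True` shows the simplest variation, a layer whose ΔW is forced to zero.

```python
# Hypothetical sketch: one gradient-descent step on a single linear layer
# y = W x with a mean-squared-error loss, written in plain Python.
Matrix = list[list[float]]
Vector = list[float]


def matvec(W: Matrix, x: Vector) -> Vector:
    """Compute the matrix-vector product W x for a row-major matrix W."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def compute_delta(W0: Matrix, batch: list[tuple[Vector, Vector]], lr: float) -> Matrix:
    """Return ΔW = -lr * dL/dW, where L is the batch mean of 0.5 * ||W0 x - y||^2."""
    rows, cols = len(W0), len(W0[0])
    grad = [[0.0] * cols for _ in range(rows)]
    for x, y in batch:
        err = [p - t for p, t in zip(matvec(W0, x), y)]  # residual W0 x - y
        for i in range(rows):
            for j in range(cols):
                # dL/dW is the outer product err x^T, averaged over the batch
                grad[i][j] += err[i] * x[j] / len(batch)
    return [[-lr * g for g in row] for row in grad]


def train_step(W0: Matrix, batch: list[tuple[Vector, Vector]],
               lr: float = 0.1, frozen: bool = False) -> tuple[Matrix, Matrix]:
    """Apply one update W = W0 + ΔW; a frozen layer simply gets ΔW = 0."""
    if frozen:
        delta = [[0.0] * len(W0[0]) for _ in W0]
    else:
        delta = compute_delta(W0, batch, lr)
    W = [[w + d for w, d in zip(w_row, d_row)] for w_row, d_row in zip(W0, delta)]
    return W, delta
```

For example, calling `train_step` on a zero 2 × 2 matrix with the batch `[([1.0, 0.0], [1.0, 2.0]), ([0.0, 1.0], [3.0, 4.0])]` nudges every weight toward the targets, while the same call with `frozen=True` hands back W0 unchanged.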

The diagram below shows it for one layer. Before the step, the forward pass uses the old weight:

$$y = W_0 x$$

After the step, the same input flows through the new weight:

$$y' = (W_0 + \Delta W)\,x = W_0 x + \Delta W\,x$$
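As a small numeric check of the before/after picture, the sketch below runs one linear layer with made-up values for W0, ΔW and x (all hypothetical, chosen only for illustration). Because the layer is linear, (W0 + ΔW)x equals W0 x + ΔW x: the updated layer produces the old output plus a correction term.

```python
# Sketch: forward pass of one linear layer before and after a weight update.
# W0, delta_W and x are hypothetical example values.


def matvec(W, x):
    """Matrix-vector product W x for a row-major matrix."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def add(A, B):
    """Elementwise sum of two matrices with the same shape."""
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


W0 = [[1.0, 2.0, 0.0], [0.0, 1.0, -1.0]]       # 2 x 3 "pretrained" weight
delta_W = [[0.1, 0.0, -0.2], [0.0, 0.3, 0.0]]   # ΔW has the same 2 x 3 shape
x = [1.0, 2.0, 3.0]

y_before = matvec(W0, x)                # y = W0 x  -> [5.0, -1.0]
y_after = matvec(add(W0, delta_W), x)   # y' = (W0 + ΔW) x
correction = matvec(delta_W, x)         # ΔW x, the change in the output
```

Comparing `y_after` with `y_before` plus `correction` entry by entry shows the two agree (up to floating-point rounding).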

ΔW always has the same shape as W0: for a 40 × 30 weight matrix, ΔW is also 40 × 30, or 1200 values, and in a full update every one of them is a free parameter learned by gradient descent.
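The shape rule and the arithmetic can be checked directly. This sketch uses zero-filled placeholder matrices (only their shapes matter) and hypothetical helpers `zeros` and `shape`; it builds ΔW with the same shape as the 40 × 30 W0 and counts its entries.

```python
# Sketch: ΔW must match W0's shape, so a full update has one value per weight.
# zeros() and shape() are hypothetical helpers; the values are placeholders.


def zeros(rows, cols):
    """Return a rows x cols matrix of zeros as nested lists."""
    return [[0.0] * cols for _ in range(rows)]


def shape(M):
    """Return (rows, cols) of a nested-list matrix."""
    return (len(M), len(M[0]))


W0 = zeros(40, 30)              # the 40 x 30 weight matrix from the text
delta_W = zeros(*shape(W0))     # ΔW is created with exactly W0's shape

n_params = sum(len(row) for row in delta_W)
print(shape(delta_W), n_params)  # (40, 30) 1200
```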

Repeat this step hundreds of thousands of times or more, each time on a new batch drawn from a massive general-purpose corpus, and you get a pretrained model: weights that encode broad patterns of language, code, and reasoning.
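The repetition itself is easy to sketch at toy scale. The example below assumes a hypothetical three-example dataset generated by a hidden weight [[2.0, -1.0]], a mean-squared-error loss and a learning rate of 0.5, then applies the same W0 + ΔW update 200 times; the loss falls and W approaches the hidden weight. It only illustrates the loop: real pretraining differs in scale, model, loss and optimizer.

```python
# Toy sketch: repeat the update W = W0 + ΔW many times on a tiny, made-up dataset.
# All values are hypothetical; real pretraining uses vastly more data and weights.


def predict(W, x):
    """Linear layer output W x."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def loss(W, batch):
    """Batch mean of 0.5 * ||W x - y||^2."""
    return sum(
        0.5 * sum((p - t) ** 2 for p, t in zip(predict(W, x), y)) for x, y in batch
    ) / len(batch)


def delta(W, batch, lr):
    """ΔW = -lr * dL/dW, where dL/dW is the batch mean of (W x - y) x^T."""
    grad = [[0.0] * len(W[0]) for _ in W]
    for x, y in batch:
        err = [p - t for p, t in zip(predict(W, x), y)]
        for i, e in enumerate(err):
            for j, xj in enumerate(x):
                grad[i][j] += e * xj / len(batch)
    return [[-lr * g for g in row] for row in grad]


# Targets come from a hidden weight [[2.0, -1.0]] that training should recover.
batch = [([1.0, 0.0], [2.0]), ([0.0, 1.0], [-1.0]), ([1.0, 1.0], [1.0])]
W = [[0.0, 0.0]]
initial_loss = loss(W, batch)
for _ in range(200):
    dW = delta(W, batch, lr=0.5)
    W = [[w + d for w, d in zip(row, d_row)] for row, d_row in zip(W, dW)]  # W + ΔW
final_loss = loss(W, batch)
print(f"loss {initial_loss:.3f} -> {final_loss:.2e}, W = {W}")
```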

The same W0 + ΔW step can serve very different goals. When the dataset is huge and the goal is to absorb general patterns, we call it pretraining. When the dataset is small and the goal is to specialize an already-pretrained model, we call it fine-tuning. The next lesson puts those two side by side.