Fine-Tuning: the series

8 interactive diagrams

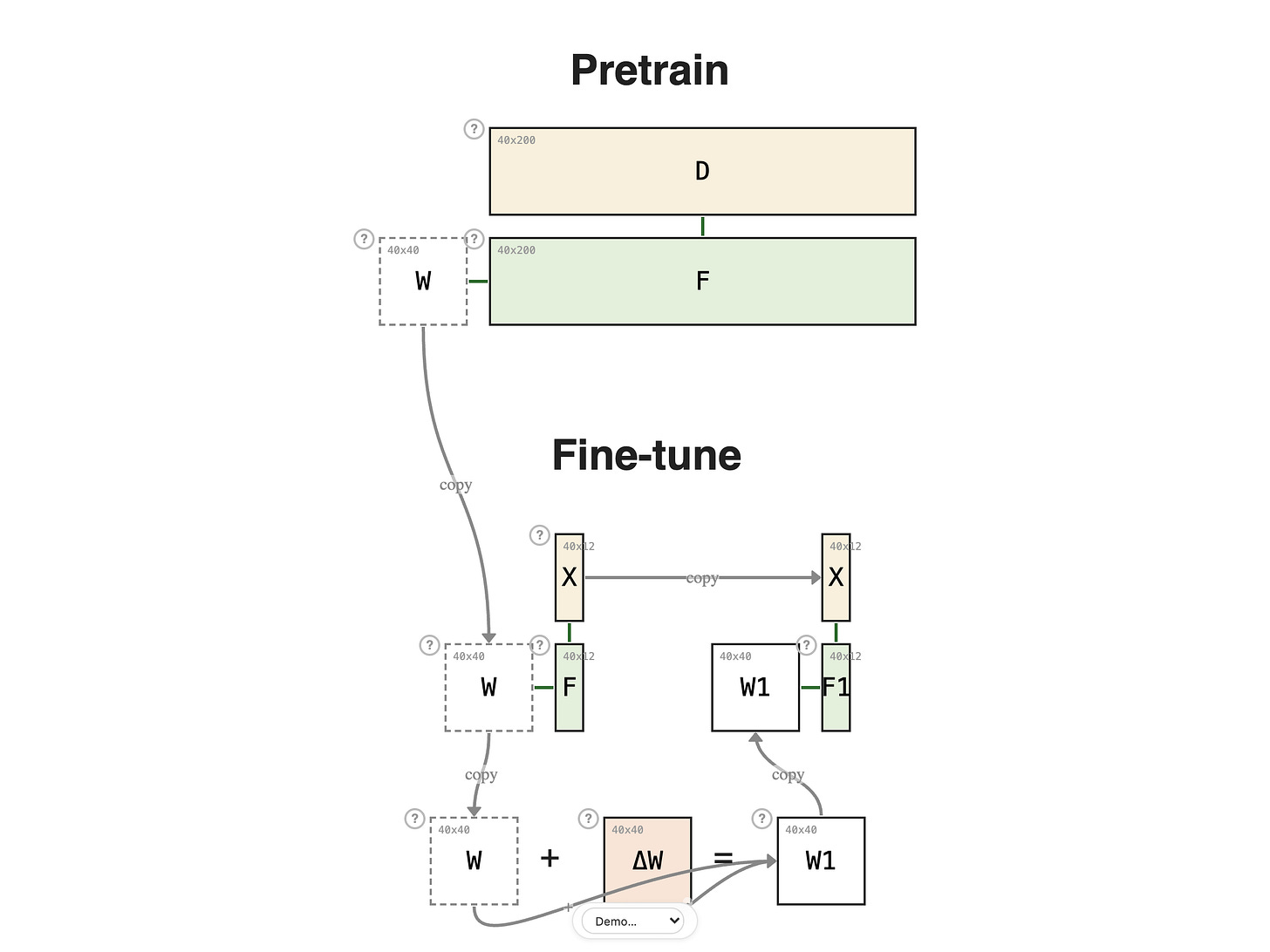

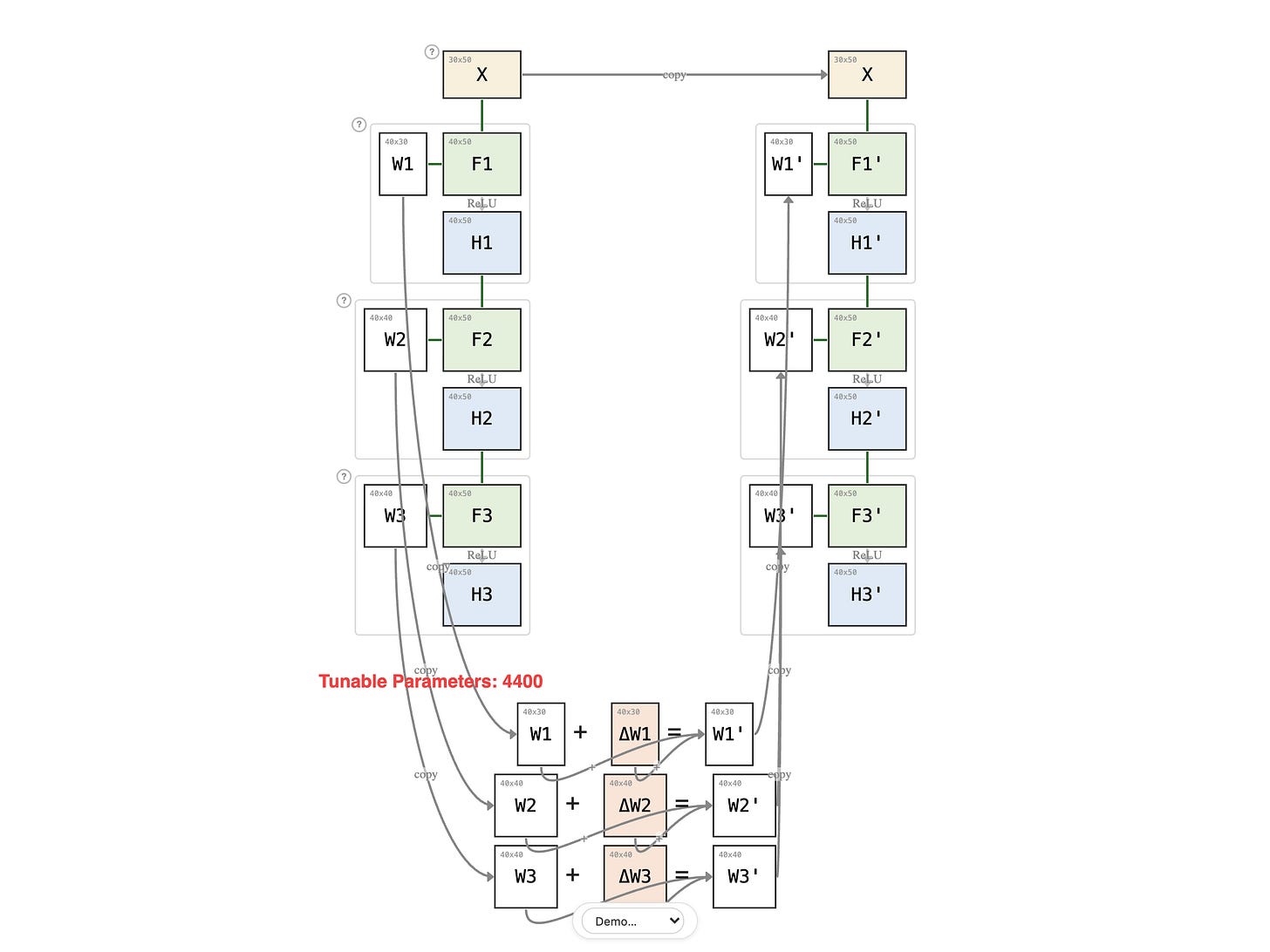

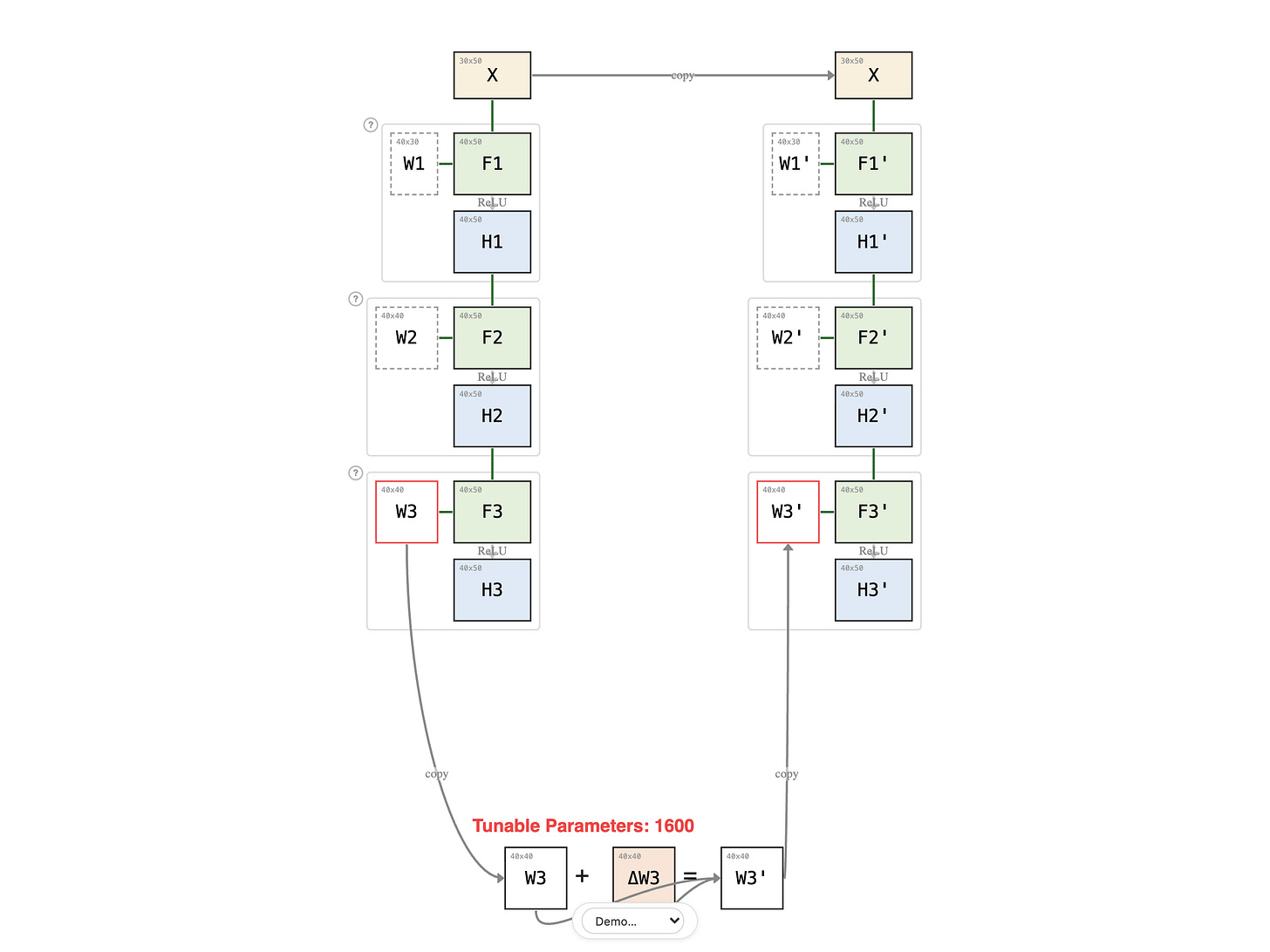

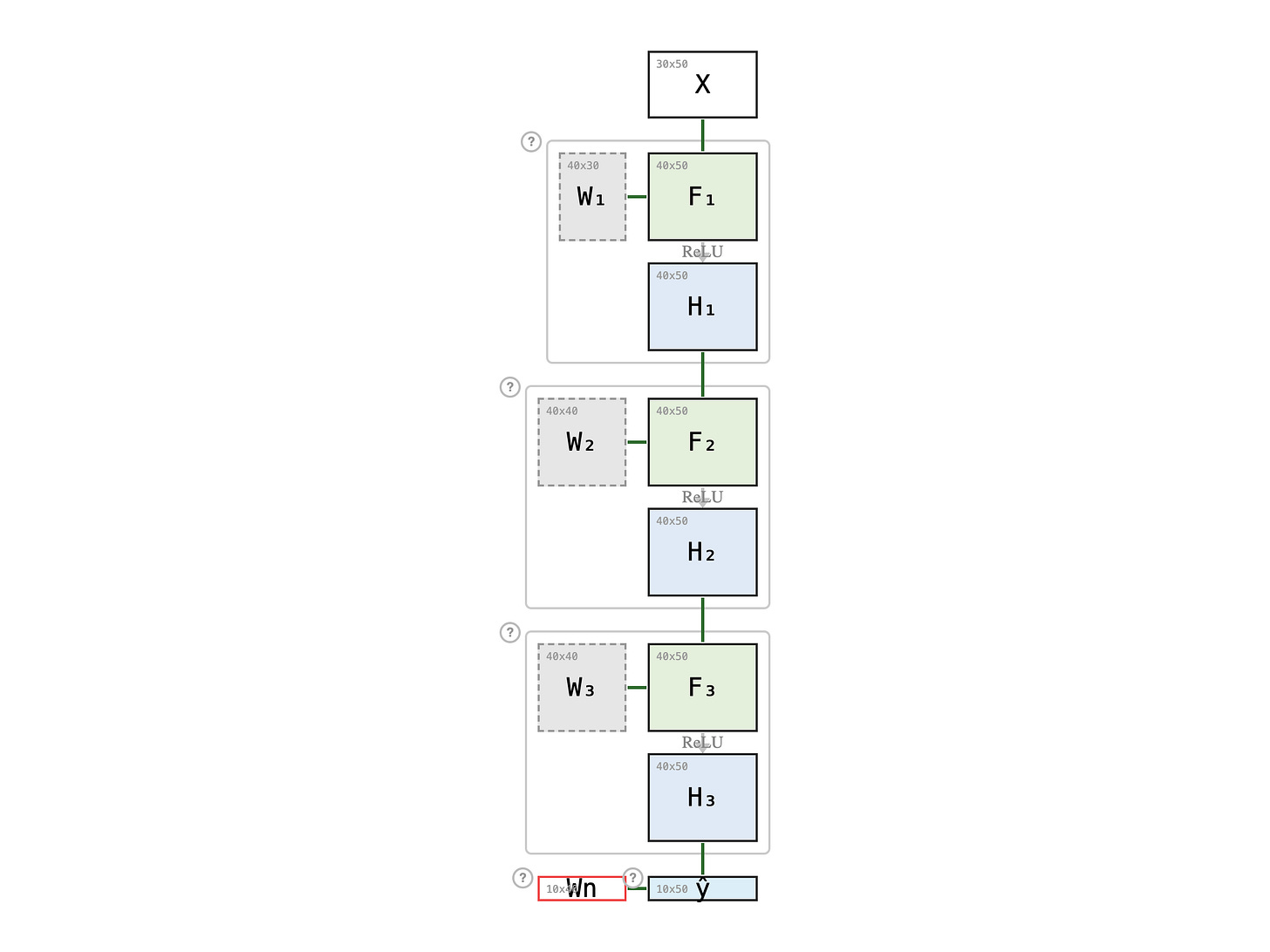

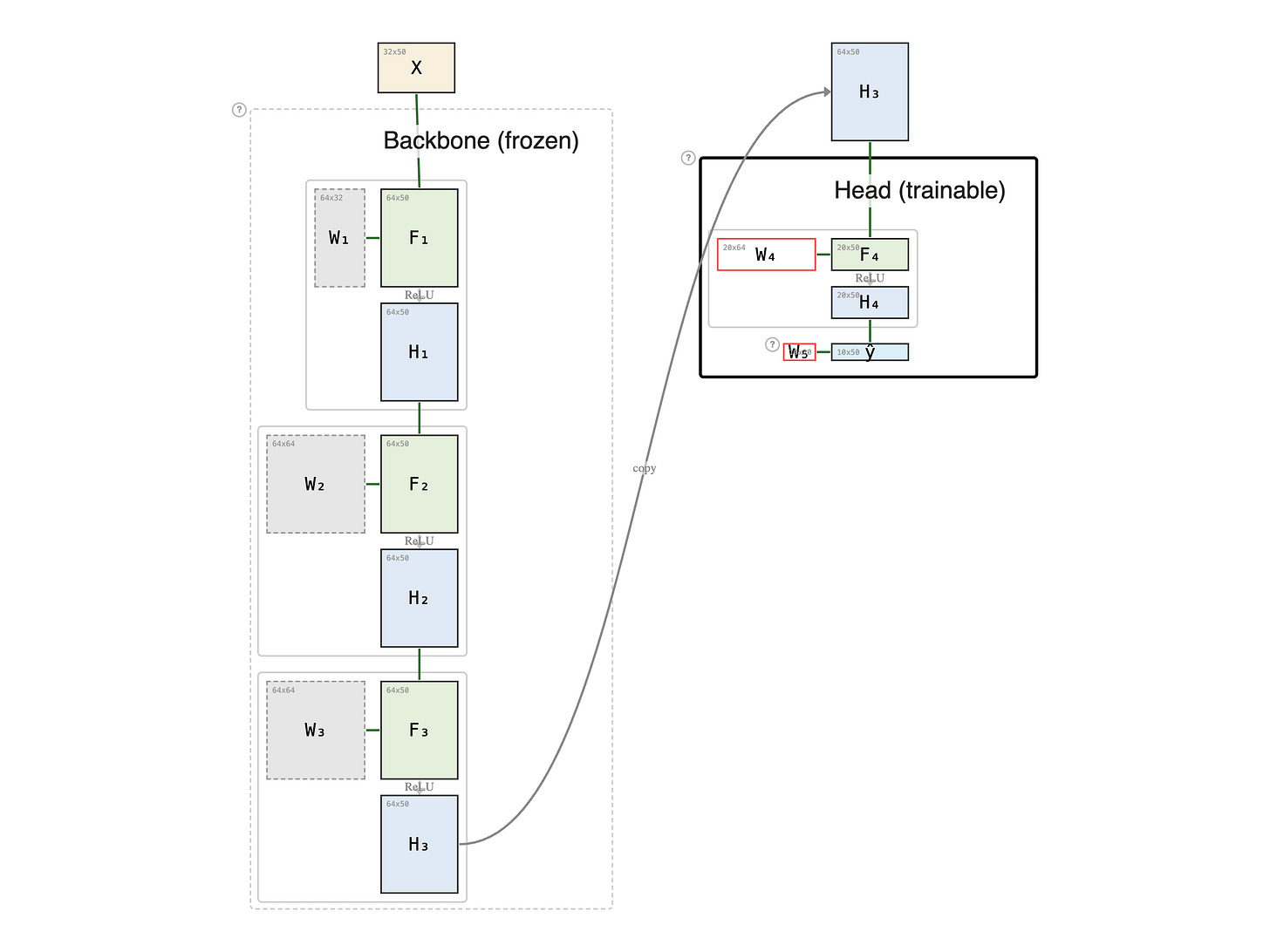

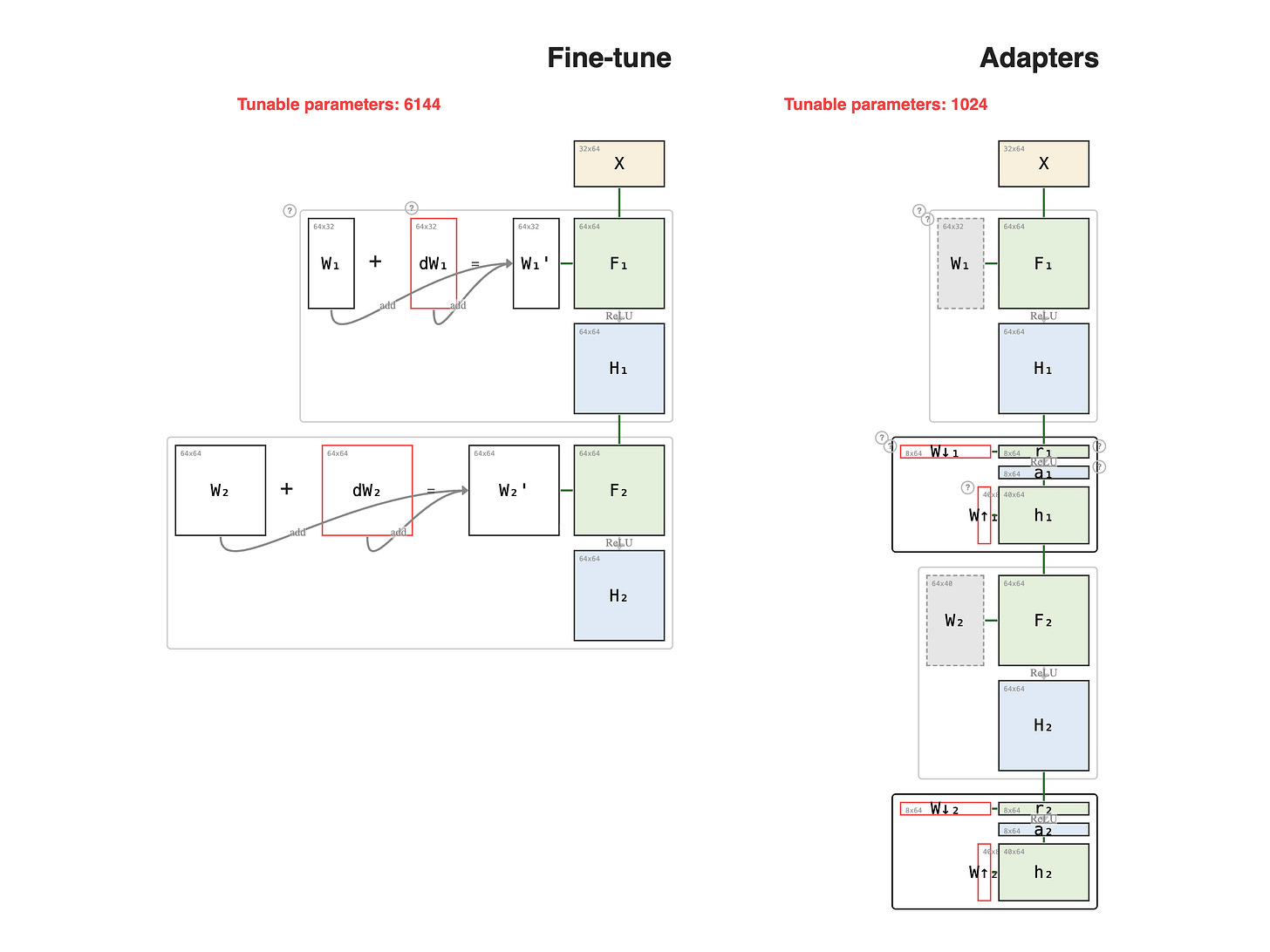

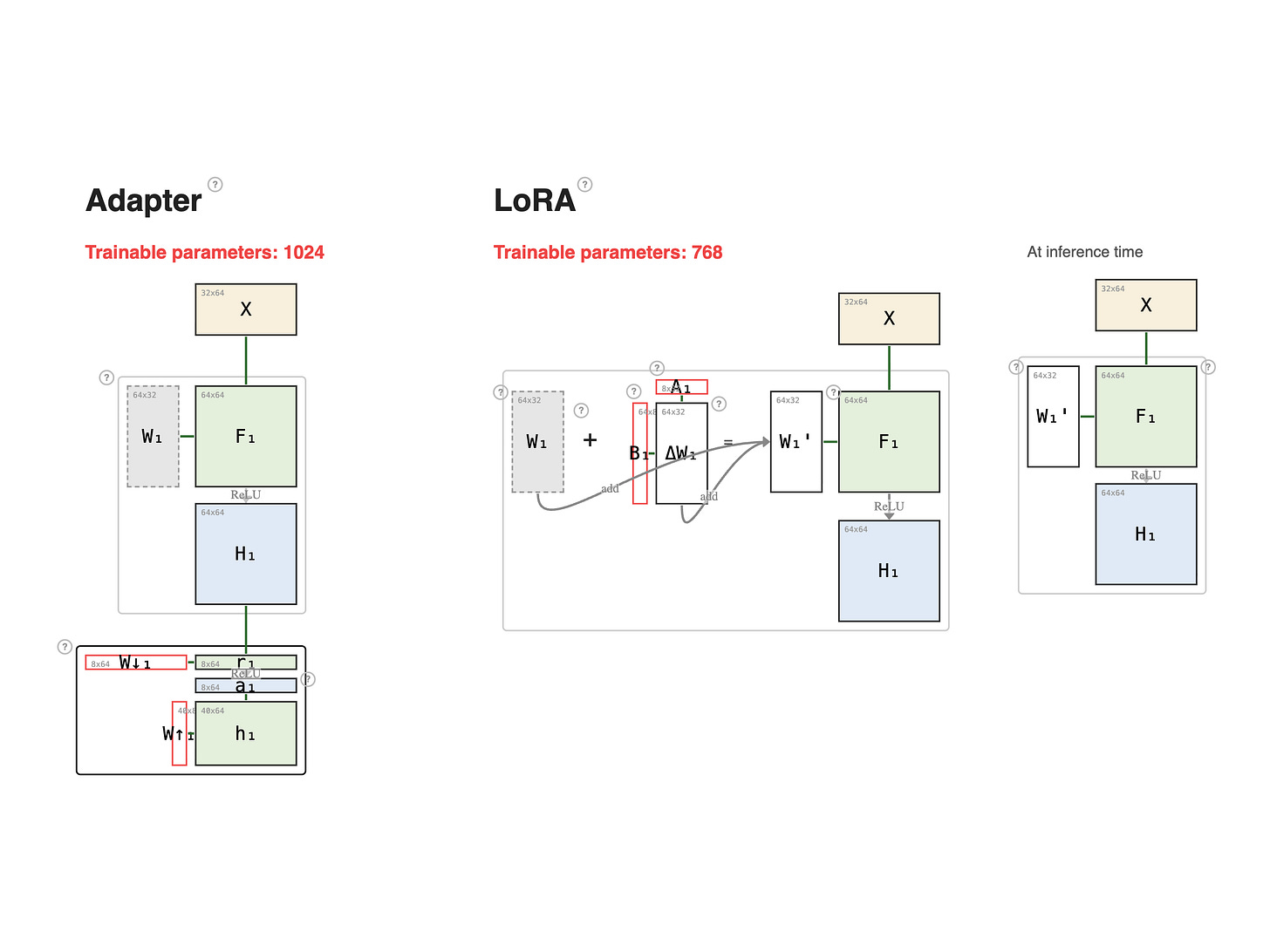

Fine-tuning is how you turn a general-purpose pretrained model into something that actually does your task — and getting it right means knowing which weights to update.
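To make "which weights to update" concrete, here is a minimal sketch in plain Python. It is an illustration only, not code from the lessons: the parameter names, values, gradients, and the `sgd_step` helper are all hypothetical. It compares updating every layer of a tiny two-layer MLP against updating only the output layer while the hidden layer stays frozen.

```python
# Hypothetical two-layer MLP parameters; names and values are illustrative only.
params = {
    "hidden.weight": [0.5, -0.2],
    "hidden.bias": [0.1],
    "output.weight": [0.3, 0.8],
    "output.bias": [0.0],
}

# Made-up gradients standing in for one backward pass on the new task.
grads = {name: [1.0] * len(values) for name, values in params.items()}


def sgd_step(params, grads, trainable, lr=0.1):
    """Return new parameters, updating only names that start with a trainable prefix."""
    updated = {}
    for name, values in params.items():
        if any(name.startswith(prefix) for prefix in trainable):
            # Trainable: take one plain gradient-descent step.
            updated[name] = [v - lr * g for v, g in zip(values, grads[name])]
        else:
            # Frozen: copy the pretrained values unchanged.
            updated[name] = list(values)
    return updated


# Update every layer.
full = sgd_step(params, grads, trainable=["hidden", "output"])

# Update only the output layer; the hidden layer stays frozen.
head_only = sgd_step(params, grads, trainable=["output"])
```

In this sketch the only difference between the two runs is the `trainable` list, so the choice of which weights to update comes down to a single argument.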

Fittingly, this series is itself a fine-tune: you bring what you already know about basic MLP neural networks, and each lesson specializes that foundation into one fine-tuning technique.

I teach this the way I teach my master's students — through higher-education metaphors. The pretrained network is someone who's finished their bachelor's.

Each of the eight lessons below shows one way to specialize further: for example, retaking every subject, refreshing just the advanced course, adding a certificate, pursuing a PhD, or inviting a private tutor.

Pick any lesson. They form a sequence, but each stands on its own.

Fine-Tuning Series:
